Computers Will Soon Read Your Mind

Technology will help patients suffering from ALS or strokes.

Originally published in The Wall Street Journal on December 14, 2023.

It’s been almost a century since psychiatrist Hans Berger made the first electroencephalogram, providing a glimpse into the electric nature of the human brain. EEG readings have helped countless people struggling to recover from ailments ranging from epilepsy and sleep disorders to head injuries and brain tumors. Technology has come a long way since then, and artificial intelligence may soon give us a new brain technology revolution, with advances in the treatment of ALS, strokes and other conditions.

As a teenager in a mentorship program, I decided to study the brain after watching a neurosurgeon implant an electrode deep into the brain of a patient with Parkinson’s whose tremors were making it impossible for her to hold a pen or drink from a cup. The surgeon implanted the electrode — designed to deliver the right amount of electricity to the exact part of the brain responsible for the tremors — and awoke the patient, her skull still open, to adjust the implant’s settings. A few turns of a dial and the shaking stopped. Her tremors were cured.

Although the discovery of EEG was revolutionary, the signals can be noisy and difficult to interpret, and recording them has required expensive equipment and controlled environments. With recent advances in sensor materials, we are approaching the point at which brain signals can be read throughout the day with comfortable and discreet wearable devices, much as a Fitbit or Apple Watch measures our heart rates. Advances in computing and AI mean we could interpret these brain signals in real time.

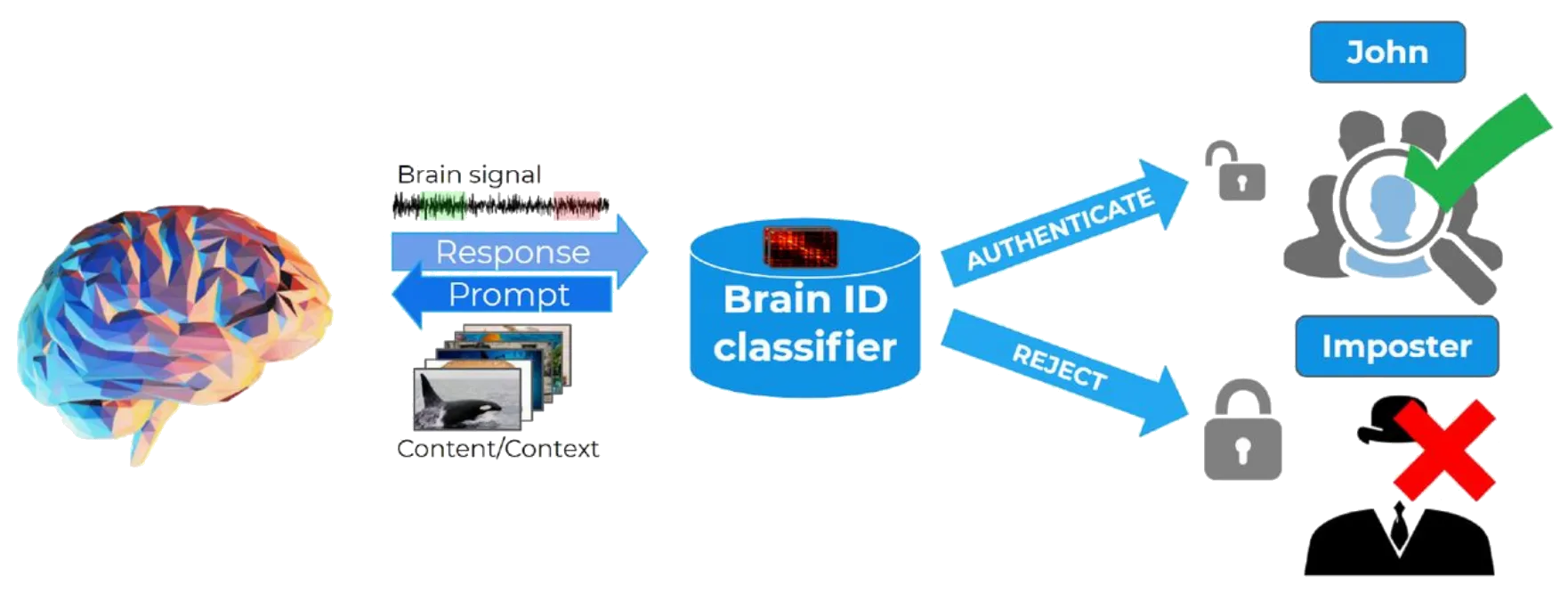

The possibilities include thought-to-speech and thought-to-movement assistive technology for ALS or paralysis patients and accelerated, customized recovery protocols for those suffering from strokes, post-traumatic stress disorder and brain trauma. Brain-computer interfaces could also help personalize teaching and training protocols to fit a learner’s cognition and memory processes, eliminate the need for usernames and passwords with a seamless “brain ID,” and enable you or a mental-health professional to monitor your emotional state throughout the day.

I was part of the team that tried to develop a brain-computer interface for the astrophysicist Stephen Hawking, who suffered from ALS. Hawking’s Intel-designed eye-tracking and cheek-click method relied on a level of muscular control that couldn’t be taken for granted given his condition. He participated in the project, as he put it, “to assist in research, encourage investment in this area, and, most importantly, to offer some future hope to people diagnosed with ALS and other neurodegenerative conditions.” He died in 2018.

Today implant-based systems are increasingly powerful and less invasive, and wearables are improving quickly too. Many of us in the field believe we are nearing an inflection point when countless people will see the fruits of decades of research. The stakes are high. Although every new technology carries promises and risks, few are tied so intimately to who we are.

Mr. Furman is a founder and CEO of Arctop, which makes brain-decoding software.

Source: The Wall Street Journal — “Computers Will Soon Read Your Mind”