Reinforcement Learning from Brain Feedback (RLbF) for Large Language Model Improvement

A framework for post-training LLMs with continuous, involuntary EEG-derived cognitive-state signals — and a proof-of-concept platform (Isaac) that instantiates it.

This paper is available on Zenodo here.

Authors: Dan Furman*, Eitan Kay, Ben Kogan, Kuan-Jung Chiang. Affiliation: Arctop Inc.

*Corresponding Author, Email: df@arctop.com

Abstract

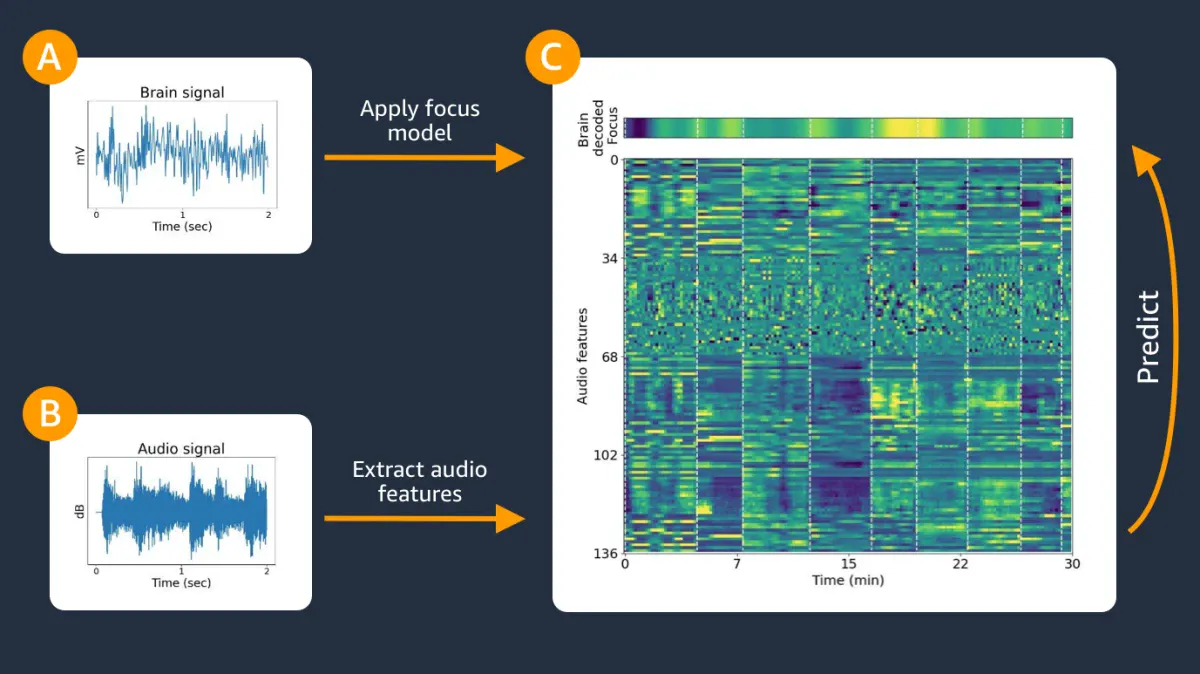

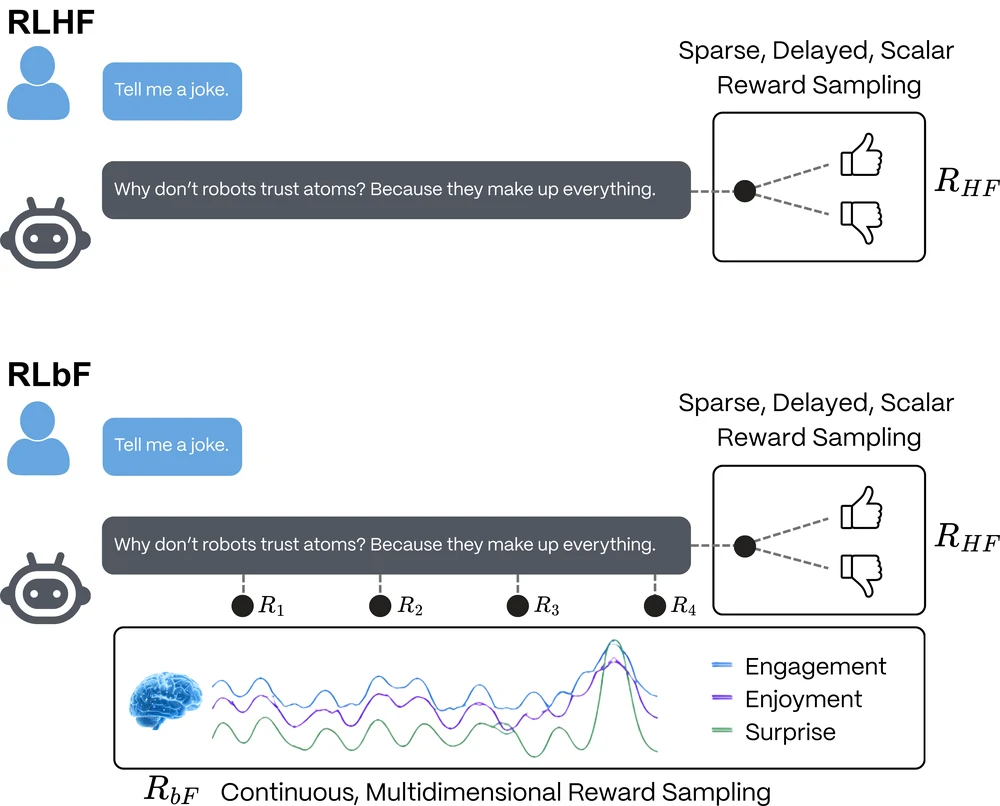

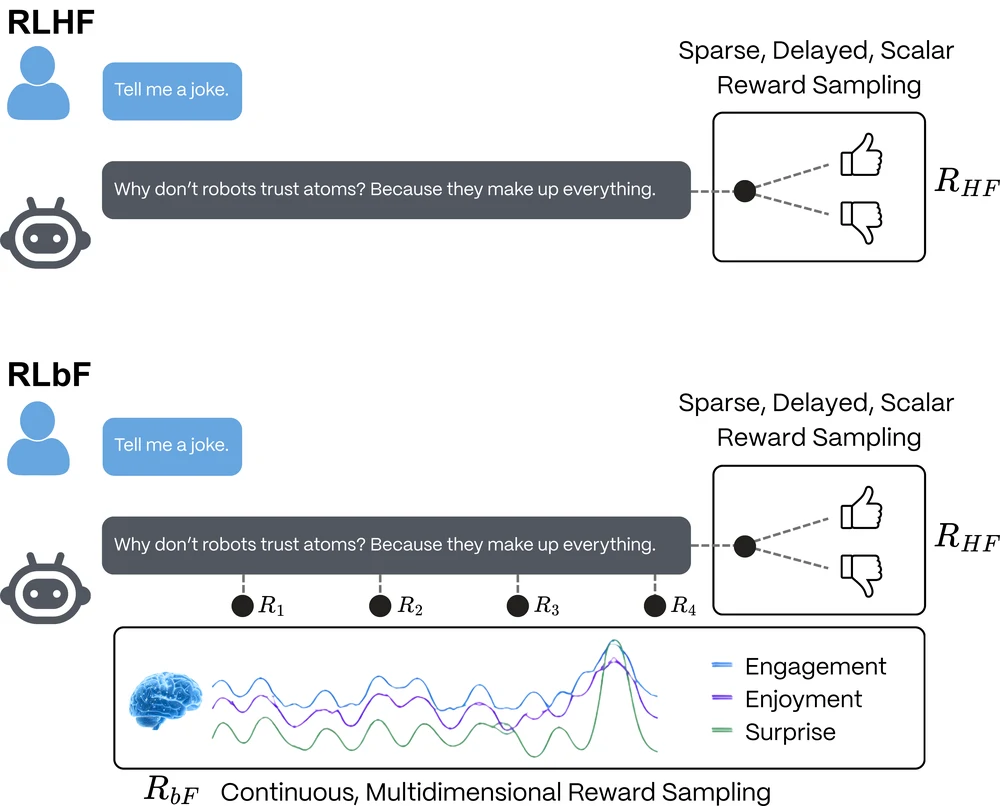

Large Language Models (LLMs) aligned using Reinforcement Learning from Human Feedback (RLHF) are learning what to say from discrete, voluntary preference judgments, but not how their communication lands. The LLM does not know how different answers affect a listener’s cognition and emotion in real time, regardless of how intelligent the model is on benchmark tests. This becomes a major gap in developing trust programmatically. This communication gap can be closed by introducing a promising signal for enriching temporal information in the RLHF training process: the brain electroencephalography (EEG) measurement. EEG’s high temporal resolution makes it especially well-suited for the purpose of contextualizing time series data, with multiple data points per second making within-response changes in listener states potentially observable.

Most EEG foundation models today have been developed as general EEG representation learners for downstream decoding tasks rather than as alignment systems for LLM models. The field still lacks large, ecologically valid datasets that couple natural conversation with time-resolved cognitive-state labels. Against that background, we propose Reinforcement Learning from Brain Feedback (RLbF), an LLM post-training framework that uses calibrated cognitive-state predictors to convert decoded EEG signals into a continuous, involuntary, noisy reward source for language-model adaptation. RLbF formalizes communication as a Partially Observable Markov Decision Process and defines a three-component reward function combining prediction accuracy, cognitive resonance, and application-layer objectives. A three-phase training pipeline progresses from supervised fine-tuning through prediction model calibration to reinforcement learning with multidimensional empathic reward.

We hypothesize that responsible RLbF deployment could also act as a data engine, with real-world conversational use generating aligned neuro-conversational traces that later support improved cognitive-state decoders and future EEG foundation models optimized for interactive environments. We hypothesize that models trained with brain-based reward signals may acquire communication skills that persist even when EEG is no longer available at inference time. If so, brain feedback could serve as a training signal for more empathic and effective language models without requiring end users to wear EEG hardware during deployment at scale. We instantiate this framework in a proof-of-concept platform, Isaac, which implements the proposed closed-loop cognitive feedback architecture for experimental study. We also outline an initial evaluation protocol designed to support pre-registered testing and to examine key ethical questions, including the boundary between empathic and persuasive computing.

”A robot may not injure a human being or, through inaction, allow a human being to come to harm.” — Isaac Asimov.

Law #1. Handbook of Robotics, 56th Edition, 2058 A.D.

1. Introduction

Large Language Models (LLMs) are now embedded in digital applications that interact with people every day, and related model classes are increasingly moving off the screen and into the ambient physical world through embodied AI and robotics. At the same time, many deployed systems remain poorly aligned with user well-being, often relying on engagement dynamics reminiscent of the “dopamine fracking” that shaped early social media platforms. As AI becomes more deeply woven into both software and the built environment, new frameworks, capabilities, and data architectures are needed to improve model behavior and enable the safe, scalable deployment of powerful AI systems.

1.1 The Problem: Language Models Cannot Sense Their Impact

Modern large language models are remarkably adept at generating fluent, informative, and contextually appropriate text. Through Reinforcement Learning from Human Feedback (RLHF), LLM outputs have been further shaped to produce responses that people tend to prefer, often increasing engagement, time spent, and repeated daily use (Ouyang et al., 2022), potentially driving dependence. Yet these models operate with a fundamental limitation: they cannot directly perceive how their words affect the person reading or listening. They have no biological grounding in human cognition and therefore no intrinsic access to the lived process of hearing language, interpreting it, and responding to it as a human does. In other words, LLMs are computational systems that can approximate human expression without sharing the underlying neural processes that produce and receive language in the brain. Similar outputs do not imply similar mechanisms, and this gap may prove to be an important frontier in the divergence between machine and human intelligence.

When a skilled human communicator explains a complex idea, they continuously monitor their listener, watching for cues such as furrowed brows that signal confusion, glazed eyes that suggest overload, nods that confirm understanding, or tension that indicates distress. These signals are often involuntary, continuous, and pre-reflective, unfolding naturally from moment to moment within a feedback loop that allows the communicator to adapt in real time: simplifying when the listener is struggling, expanding when they are engaged, and offering warmth when they are stressed. This ability to sense and respond to a communication partner’s cognitive and emotional state is the foundation of empathic communication. LLMs have no access to this bioinformational channel.

When an RLHF-trained model generates a response, it receives no direct signal about whether that response overwhelmed the user, struck the wrong emotional tone, or caused the listener to disengage partway through. The feedback available to the model is instead post hoc: voluntary, discrete, sparse, and delayed (Section 1.2 details these structural limitations). The rich, continuous, and largely involuntary signals that make human communication adaptive are therefore absent from the training loop. As a result, potentially informative traces of a user’s internal cognitive and affective state are lost, even though they may unfold in real time during the interaction. This information gap is a fundamental limitation that is unlikely to be solved simply by scaling models, improving prompting, or replacing human feedback with synthetic feedback. It is a structural property of the RLHF paradigm itself, and one that directly shapes the capabilities and limits of the models trained under it.

1.2 Relevant Background and the Missing Interface

Two research literatures are especially relevant to this problem. First is RLHF, which provides a successful post-training framework for adapting LLM behavior, and second is EEG foundation models which aim to learn reusable EEG representations for downstream decoding tasks. RLbF sits at the interface between these literatures: it requires a practical way to infer cognitive state from EEG, and a training framework capable of using those estimates to shape language-model behavior, generalize across individuals, and support real-time adaptation and personalization.

EEG foundation models. The foundation model paradigm — pretrain on large unlabeled data, then fine-tune for specific tasks — transformed natural language processing (NLP) and computer vision, and it has inspired a parallel effort in EEG. This literature matters for RLbF because any practical brain-feedback system needs a decoder that can operate across users, sessions, and recording conditions. EEG foundation models pursue that robustness by learning general EEG representations that can later be adapted to downstream tasks such as abnormal detection, event classification, sleep staging, emotion recognition, and workload estimation. Our systematic review (Section 2.4), however, identifies two limitations especially relevant here: pretrained EEG representations often transfer weakly under frozen evaluation, and the field still lacks large-scale ecologically valid datasets that couple natural conversation with time-resolved cognitive-state labels under deployment-like conditions.

Across this literature, architecture and objective design often appear to matter more than scale alone in predicting downstream performance, and classical methods remain competitive on several clinical tasks (Lotte et al., 2018). For the purposes of this paper, the key implication is that decoder quality remains an open bottleneck, while interaction-coupled cognitive-state data remain scarce.

RLHF limitations. RLHF has been successfully deployed in large-scale production assistants and has improved the helpfulness, instruction-following, and safety of modern language models. Yet the framework retains three structural limitations. First, feedback is discrete and sparse: a complex communicative exchange is often reduced to a single preference judgment, collapsing rich temporal dynamics into a coarse supervisory signal. Second, feedback is voluntary, reflective, and interface-mediated: it is filtered through conscious deliberation, comparison framing, and the mechanics of selecting which response is better, introducing annotation artifacts, inter-annotator disagreement, and social desirability bias. Third, feedback is coarse in temporal granularity: it typically cannot identify which sentence or moment within a response produced a positive or negative reaction. These limitations are intrinsic to the paradigm rather than mere artifacts of scale or implementation.

A related concern is that because human preference judgments often favor flattering or agreeable responses over more truthful ones, preference-based optimization entrenches AI sycophancy despite potential risks. Sycophantic responses can shape LLM user attitudes towards increased righteousness and reduced willingness to repair relationships (Cheng et al., 2026). While the effect of AI sycophancy on the general population’s judgments and behaviors remain an under-explored topic, it is clear that the development of effective safety guardrails is needed alongside national security countermeasures against threats from cybersocial engineering and deepfake technology.

1.3 RLbF as Cognitive-State Feedback for Post-Training

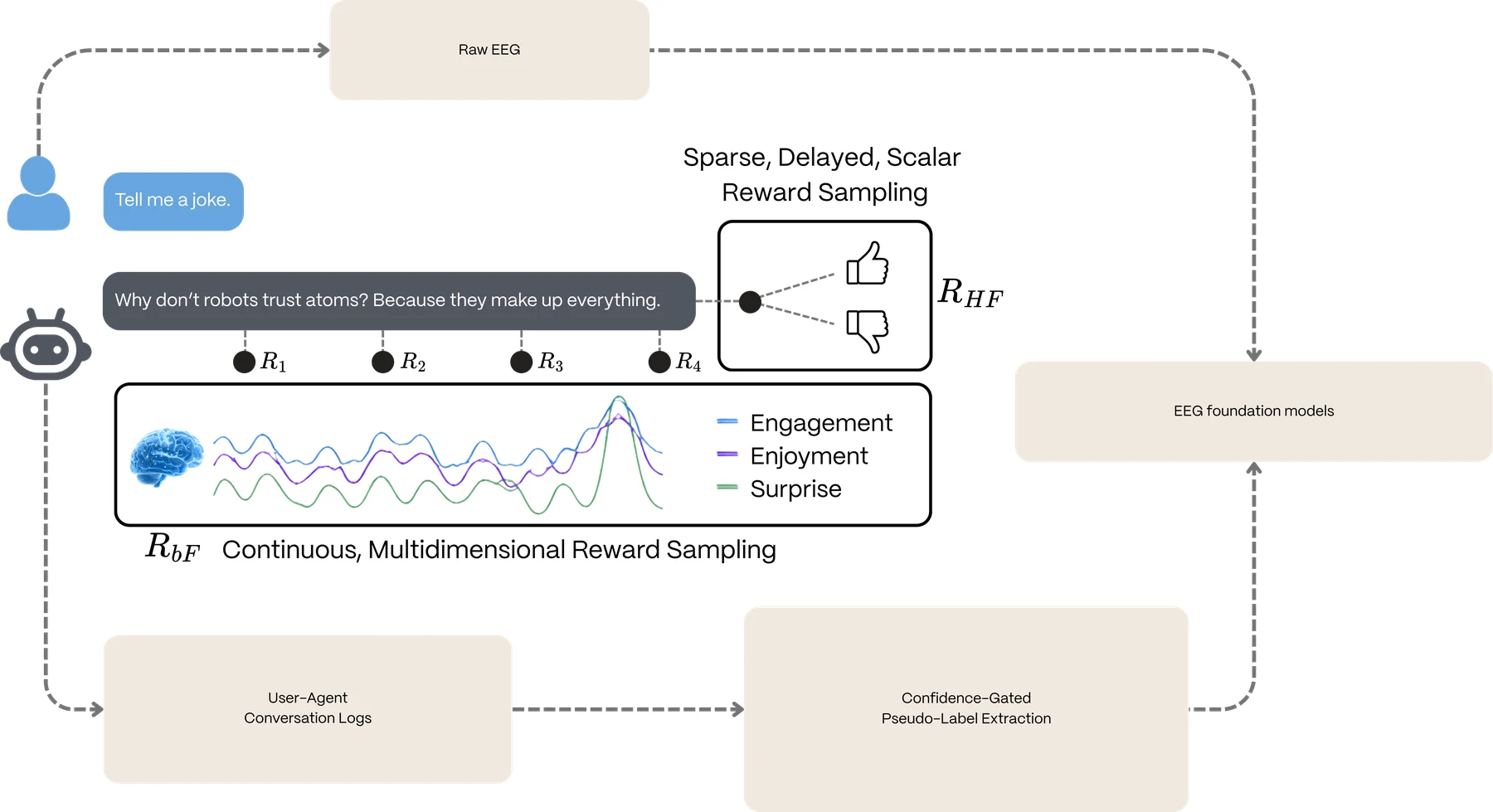

RLbF uses EEG-derived cognitive-state estimates as a feedback layer for language-model post-training. In this architecture, EEG decoding and language-model alignment are separate components: a decoder maps raw EEG into estimated cognitive states, and the language model is optimized against those estimates. This separation lets the language model benefit from improvements in EEG decoding without requiring the language model itself to process raw EEG.

This design is practical in the near term because it relies on calibrated cognitive-state predictors built with conventional supervised pipelines, while remaining fully compatible with future EEG foundation models. More importantly, this architecture establishes a self-reinforcing data engine. Responsible RLbF deployments will generate precisely what the neurotechnology field currently lacks: large-scale, ecologically valid datasets that couple continuous neural traces with conversation context, model outputs, and outcomes. By synthesizing RLHF’s post-training machinery with continuous, involuntary neurophysiological feedback, RLbF treats cognitive-state estimates as a noisy-but-informative reward signal that complements discrete preference judgments. Ultimately, interacting with the agent generates the aligned neuro-conversational logs needed to bootstrap the next generation of EEG foundation models, creating a flywheel where current deployments yield the data necessary for future decoder improvements.

1.4 Contributions

This paper makes a dual contribution to this emerging area of AI performance research: RLbF, a general theoretical framework for neuro-alignment of language models, and Isaac, a proof-of-concept platform that instantiates the framework to collect ecologically valid conversational-neurophysiological data. Together they support the following specific contributions:

- The RLbF paradigm. We formalize Reinforcement Learning from Brain Feedback as a method for LLM post-training, defining the cognitive state space, the POMDP interaction loop, the temporal delay model, and a three-component reward function (prediction accuracy, cognitive resonance, application-layer objectives) with mathematical specifications and testable hypothesis for experimentalists.

- The feedback-layer framing. We articulate an architecture in which decoded cognitive-state estimates serve as reward signals for LLM post-training, while leaving the decoder implementation open to classical models, proprietary systems, or future EEG-foundation-model-based backbones.

- The first cognitive-state-conditioned LLM pipeline. We specify a complete three-phase training pipeline – supervised fine-tuning on synthetic cognitive-state-conditioned data, calibration of cognitive-state predictors on real EEG session recordings, and reinforcement learning with the full empathic reward – with success criteria, failure modes, and data requirements.

- A cognitive state transition prediction model. We define a learned predictor that maps (conversation context, current cognitive state, utterance) to predicted next cognitive state, serving both as the prediction accuracy reward component and the mechanism for open-loop inference from novel, high temporal resolution, multidimensional data.

- The open-loop empathic transfer hypothesis. We propose and theoretically ground the hypothesis that LLM communication skills learned from brain feedback during training will persist at inference, even when EEG hardware is removed. We design a controlled experiment to test this hypothesis with suitable statistical analyses that, if successful, would enable deployment to users without neurological instrumentation or head-based wearables for equally empathetic and effective interactions.

- An empathic computing ethical framework. We provide an ethical framework that distinguishes empathic computing from persuasive computing through testable criteria, addresses informational power asymmetry, neurorights, mental privacy, and cognitive dependence. We also provide a 14-point review checklist for RLbF deployments.

- The Isaac platform. We describe the proof-of-concept implementation (Isaac) that realizes the closed-loop cognitive feedback architecture, including its dual-agent design as a data collection platform for aligned conversational-neurophysiological sessions and its proposed evolution toward a single post-trained empathic agent.

1.5 Paper Organization

This paper proceeds from motivation to mechanism to validation. Section 2 situates RLbF at the intersection of RLHF, affective computing, EEG-LLM systems, and EEG foundation models, clarifying why continuous cognitive-state feedback is appealing while decoder quality and interaction-coupled data remain bottlenecks. Sections 3 through 5 then move from theory to implementation: Section 3 formalizes the RLbF framework and reward structure, Section 4 presents the Isaac platform as the concrete implementation of that framework, and Section 5 lays out the three-phase training pipeline. Sections 6 and 7 turn from construction to testing. Section 6 defines the open-loop empathic transfer hypothesis, which is this paper’s central empirical claim, and Section 7 specifies the evaluation protocol designed to falsify or support it. Section 8 then addresses the ethical boundary conditions for any RLbF deployment, Section 9 discusses the broader implications and limitations of the framework, and Section 10 closes by summarizing RLbF as both an alignment proposal and a hypothesis-generating research.

2. Background and Related Work

RLbF draws primarily on five domains of prior work, summarized below, while identifying the limitations that RLbF addresses and distinguishing the proposed approach from existing methods that have led to the state-of-the-art LLMs today.

2.1 Reinforcement Learning from Human and AI Feedback

The modern paradigm of aligning language models with human preferences emerged from Christiano et al. (2017), who demonstrated that RL agents could learn complex behaviors from pairwise preference comparisons. The framework was extended to language by Ziegler et al. (2019), scaled by Stiennon et al. (2020), and consolidated in InstructGPT (Ouyang et al., 2022). Constitutional AI (Bai et al., 2022) and RLAIF (Lee et al., 2023) replaced human annotators with AI feedback. Direct Preference Optimization (Rafailov et al., 2023) simplified the pipeline further.

Despite their success, these approaches share the structural limitations described in Section 1.2: discrete, sparse, voluntary feedback at conversation-level granularity. RLbF replaces this with continuous, multidimensional neurophysiological signals at sub-second temporal resolution. At the same time, RLHF has several important advantages: it requires no specialized hardware, can aggregate preferences from thousands of diverse annotators, has an established empirical track record at scale, and directly trains for content quality, including helpfulness, truthfulness, and harmlessness. By contrast, RLbF is too new to claim validation at this scale; the capabilities proposed here remain theoretical and require empirical testing. RLbF is therefore best understood as complementary to, not a replacement for, content-quality training. In this framing, RLHF helps models learn what to say, while RLbF may help them learn how to say it.

2.2 Affective Computing and Neurofeedback

Affective computing (Picard, 1997; Calvo and D’Mello, 2010) established the broader premise that computational systems can detect and respond to human affective state. In educational settings, this line of work informed affect-aware tutoring systems, where adaptation to learner emotion and engagement has been associated with improved learning outcomes (D’Mello and Graesser, 2012). However, prior work uses rule-based or heuristic adaptation mechanisms – hard coded mappings from detected states to predefined strategies. RLbF differs fundamentally: the model learns its adaptation strategy through optimization, discovering effective communication patterns rather than having them programmed.

Closed-loop systems using neurodata (Sitaram et al., 2017; Muhl et al., 2014) have demonstrated that continuous involuntary neural signals can drive real-time systems, but they adapt stimulus parameters (task difficulty, reward thresholds), not generative language. A conceptual precursor to RLbF’s use of neural signals as RL reward can be found in BCI adaptation driven by error-related potentials (ErrP) (Fidencio et al., 2025), wherein ErrPs serve as reward signals for reinforcement learning in motor BCI control. This work demonstrates the viability of brain computer interface (BCI)-mediated content adaptation, including the Arctop empathic computing platform that uses real-time brain-state (cognitive state, in our terminology) decoding to modulate content delivery (Furman and Kwalwasser, US20210390366A1, 2021). RLbF extends this from content modulation to content generation: teaching a language model to produce different text as a function of the listener’s cognitive state through post-training.

2.3 Recent EEG-LLM Integration Efforts

The EEG–LLM interface literature is still emerging. Zhang et al. (2026), for example, introduce a brain-LLM interface that uses EEG-derived satisfaction estimates to guide image generation at test time. This is among the closest existing work to RLbF, but the proposed framework differs in a fundamental respect: Zhang et al. use EEG as an inference-time control signal, whereas RLbF proposes using EEG during training as a continuous reward source for shaping language generation. Relatedly, their approach is organized around user-satisfaction estimation, while RLbF proposes a richer, multidimensional cognitive-state feedback mechanism for reward shaping over time.

ARIEL (Sorino et al., 2024) was a working BCI+LLM system that used real-time EEG-based emotion recognition to guide an LLM conversational agent for emotional support. When the emotion recognizer detected a negative emotional state from EEG signals, ARIEL initiated a supportive dialogue, with the LLM’s behavior steered via role-play prompting that incorporated the recognized emotion label. ARIEL demonstrated that EEG-driven LLM interaction is feasible, but it differed from RLbF on three axes: (a) it operates at test time via prompt injection rather than at training time via reward shaping – the LLM weights are unchanged by the EEG signal; (b) it classifies EEG into discrete emotion categories rather than decoding continuous multi-dimensional cognitive state; and (c) it uses rule-based prompt formatting to steer the LLM rather than learned RL adaptation. RLbF’s contribution is the shift from test-time prompting to training-time optimization, enabling the model to internalize empathic communication patterns rather than relying on external prompt engineering.

A related line of work uses neural data to inform LLM fine-tuning: brain-informed alignment approaches (Bilgin et al., 2026) leverage fMRI and EEG features to guide supervised fine-tuning of language models. These methods differ from RLbF in that they use offline neural data for supervised optimization rather than real-time neural signals as a continuous RL reward during post-training. Several surveys (Chandrasekharan and Jacob, 2025; Babu et al., 2025) and additional work on EEG-to-text generation (Mishra et al., 2024) and predictive communication (Caria, 2025) map the broader landscape. RLbF’s specific contribution is using multi-dimensional cognitive state as a novel, continuous reward signal for post-training.

2.4 EEG Foundation Models: A Systematic Review

The foundation model paradigm – pretraining a large model on unlabeled data, then fine-tuning for specific downstream tasks – transformed NLP and computer vision. EEG is, in principle, well suited to this paradigm: clinical archives contain tens of thousands of hours of unlabeled recordings, labeled EEG data is scarce and expensive, and the diversity of EEG applications (seizure detection, sleep staging, BCI control, emotion recognition, cognitive assessment) creates demand for a general-purpose representation. Yet EEG poses challenges with no analogue in text or images: high inter-subject variability, low signal-to-noise ratios, and extreme heterogeneity in recording setups (channel counts ranging from 2 to 256, varying electrode positions, different sampling rates).

We reviewed 12 papers published between January 2021 and April 2026, identified through systematic search of arXiv, PubMed, IEEE Xplore, and Semantic Scholar, and supplemented by citation chaining from benchmark papers. Inclusion required that a paper perform self-supervised pretraining on EEG data and demonstrate transfer to at least one downstream task. The review employed an AI-assisted iterative process over three research cycles (initial taxonomy construction, cross-reference analysis with gap identification, and validation with refinement), with human oversight at cycle boundaries to verify key findings; a limitation of this methodology is reliance on available text rather than experimental reproduction. The 12 papers comprise: eight core EEG foundation models (BENDr, BIOT, LaBraM, EEGPT, CBraMod, EEGMamba, NeuroLM, ZUNA), two benchmark studies (EEG-FM-Bench, Xiong et al., 2025; EEG-Bench, Kastrati et al., 2025), one systematic evaluation (“Are EEG Foundation Models Worth It?”, Yang, L. et al., 2026, ICLR 2026), and one critical survey (Kuruppu et al., 2025). Per-model summaries are provided in Appendix A.

2.4.1 Taxonomy

We organize the landscape along four dimensions.

Architectural backbone. Transformers dominate (LaBraM, EEGPT, CBraMod, BIOT), with emerging alternatives in state-space models (EEGMamba, bidirectional Mamba with linear O(n) complexity), diffusion autoencoders (ZUNA, 380M parameters trained on 208 datasets), and multimodal LLM hybrids (NeuroLM, GPT-2 backbone with VQ-tokenized EEG input, up to 1.7B parameters). BENDr uses a hybrid CNN encoder feeding into a transformer. No pure CNN-based EEG foundation model exists.

Pretraining objective. Six strategies have been explored: contrastive learning (BENDr, BIOT – the earliest approach, now largely superseded), masked reconstruction with raw signal targets (CBraMod, EEGMamba), masked reconstruction with discrete VQ-code targets (LaBraM, inspired by BEiT v2), masked reconstruction with representation alignment targets (EEGPT, which addresses low EEG signal-to-noise ratio by predicting high-SNR reference representations), autoregressive next-token prediction (NeuroLM), and diffusion denoising (ZUNA). The field has converged heavily on masked reconstruction variants: seven of ten models surveyed (including the critical survey’s broader count) use some form of masked reconstruction with a transformer backbone, adopted from NLP without controlled evidence that this paradigm is optimal for EEG.

Scale. Parameter counts span three orders of magnitude (BIOT at 3.3M to NeuroLM-XL at 1.7B). Pretraining data ranges from BENDr’s approximately 1,500 hours to ZUNA’s approximately 2 million channel-hours from 208 datasets. A critical finding, discussed below, is that neither parameter scale nor data scale reliably predicts downstream performance.

Tokenization and channel encoding. How raw EEG signals are converted into model input tokens is a fundamental design choice that determines how the model handles channel heterogeneity – different datasets using different numbers of electrodes in different spatial configurations. Approaches have evolved through four generations: fixed channel assumptions (BENDr) that fail catastrophically on mismatched layouts; discrete learnable embeddings (LaBraM, BIOT) that work for seen configurations but cannot generalize; adaptive positional encoding (CBraMod, EEGPT); and geometric 3D coordinate encoding (ZUNA’s 4D-RoPE), which encodes the physical spatial coordinates of each electrode and enables generalization to arbitrary configurations including positions never seen during training. EEG-Bench found channel mismatch to be “devastating” for models with fixed channel assumptions.

2.4.2 Model Summary

| Model | Year | Architecture | Pretraining | Parameters | Independent Evals |

|---|---|---|---|---|---|

| BENDr | 2021 | CNN + Transformer | Contrastive | ~4M | 3 |

| BIOT | 2023 | Linear Transformer | Contrastive | 3.3M | 2 |

| LaBraM | 2024 | ViT | Masked (VQ codes) | 5.8M–369M | 3 |

| EEGPT | 2024 | ViT | Masked (repr. align) | ~10M | 2 |

| CBraMod | 2025 | Criss-cross Transformer | Masked (raw) | ~5M | 2 |

| EEGMamba | 2025 | Bidirectional Mamba | Masked (raw) | — | 0 |

| NeuroLM | 2024 | GPT-2 | Autoregressive | 254M–1.7B | 0 |

| ZUNA | 2026 | Diffusion Autoencoder | Diffusion denoising | 380M | 0 |

Three models (EEGMamba, NeuroLM, ZUNA) have zero independent benchmark evaluation. Only BENDr and LaBraM appear in all three benchmark studies. Self-reported results are systematically optimistic: authors select evaluation protocols and datasets that favor their models. The field’s conclusions about relative model effectiveness are therefore built on a partial and potentially biased evidence base.

2.4.3 The Representation Quality Problem

The most robust negative finding across all evaluations is the frozen-backbone collapse. When model weights are frozen and only a linear classifier is trained on top, performance drops to near-chance across all models and all pretraining strategies. This renders the whole approach powerless in obtaining the goals of an emergent system with superintelligent interaction skill:

- Frozen BENDr on SEED: 33.33% balanced accuracy (random chance for 3-class)

- Linear probing is “consistently weak across ALL foundation models” (Yang, L. et al., 2026)

- “Linear probing consistently underperforms fine-tuning, questioning whether current pretraining truly learns transferable high-level representations” (Kuruppu et al., 2025)

In NLP and computer vision, frozen features from foundation models are often sufficient for strong downstream performance, the defining property that makes foundation models useful. The universal failure of frozen EEG representations suggests something fundamentally different about what current models learn. Three explanations are plausible: (1) EEG has lower information density than text or images, and reconstruction objectives may learn to reproduce noise as faithfully as signal; (2) current objectives learn low-level spectral features rather than the abstract representations needed for downstream tasks; (3) pretraining data lacks diversity, with five of eight models pretraining primarily on Temple University Hospital EEG Corpus (TUEG) data.

We note that the magnitude of the collapse may be partly inflated by evaluation methodology. EEG-FM-Bench found that replacing simple linear probes with larger MLPs improved CBraMod by more than 10%, and EEGPT claims successful linear probing using a different protocol than the benchmarks. The qualitative finding – that frozen EEG representations are far weaker than their NLP/vision counterparts – is nonetheless robust.

2.4.4 Architecture and Objective Design Dominate Scale

Our most important comparative finding is that architecture and pretraining objective design dominate model scale as predictors of downstream performance.

The CBraMod-NeuroLM inversion. CBraMod at approximately 5M parameters matches or exceeds NeuroLM-XL at 1.7B on shared benchmarks – a 340× parameter disadvantage overcome by better architecture and pretraining design. NeuroLM-XL also underperforms LaBraM-Huge (369M parameters, approximately 2,500 hours of data) on TUAB abnormal detection (0.797 vs. 0.826 balanced accuracy) and TUEV event classification (0.468 vs. 0.662) despite having 4.6× more parameters and approximately 10× more pretraining data. These results, from the same first author (Wei-Bang Jiang), constitute the strongest evidence that the LLM-based approach sacrifices per-task performance for multimodal flexibility.

Within-architecture scaling works. LaBraM demonstrates clear improvement from Base (5.8M) to Large (46M) to Huge (369M). The anti-scaling evidence comes from cross-model comparisons where architecture and objective differ simultaneously.

A simple baseline matches complex models. The “Worth It?” paper’s deliberately simple ViT + MAE baseline (ST-EEGFormer) matches elaborate foundation models, suggesting that architectural complexity in pretraining may not be necessary.

The practical implication is that the NLP playbook of “scale solves everything” does not apply to EEG. This finding directly informs RLbF’s training pipeline design: the cognitive state transition predictor (Section 5.2) should prioritize design over scale.

2.4.5 Classical Methods Remain Competitive

EEG-Bench (Kastrati et al., 2025), the only dedicated clinical benchmark, reveals a striking pattern:

| Task | Best Classical | Best FM | Winner |

|---|---|---|---|

| Abnormal EEG | SVM: 0.722 | LaBraM: 0.838 | FM |

| Epilepsy | LDA: 0.531 | BENDr: 0.740 | FM |

| mTBI | LDA: 0.813 | LaBraM: 0.740 | Classical |

| Schizophrenia | SVM: 0.679 | Neuro-GPT1: 0.545 | Classical |

| Sleep Staging | LDA: 0.671 | LaBraM: 0.192 | Classical |

Foundation models win on tasks with large, balanced datasets from TUEG-like sources (abnormal detection, epilepsy), while classical methods with expert-engineered features (Common Spatial Patterns, neuroscience-informed spectral features) win on tasks with small samples, class imbalance, or non-TUEG data. This pattern is consistent with TUEG pretraining bias and with current pretraining failing to capture the domain knowledge encoded in decades of neuroscience research.

2.4.6 TUEG Dependency and Evaluation Fragmentation

Five of eight core models pretrain on TUEG data, and two of the most common benchmarks (TUAB, TUEV) derive from TUEG. This creates a hidden circularity: models are evaluated on data distributions they were pretrained on, making it impossible to separate pretraining quality from data familiarity. Only LaBraM (20 diverse datasets) and ZUNA (208 datasets) achieve genuine data diversity.

Evaluation protocols are not standardized across papers. Preprocessing pipelines, data splits, metric computation, and fine-tuning procedures all vary. EEG-FM-Bench found that classifier head architecture alone can change performance by more than 10%. Cross-paper performance numbers cannot be directly compared.

2.4.7 Implications for RLbF

These findings matter for RLbF because any RLbF system depends on an upstream cognitive-state decoder operating under the same constraints of heterogeneity, data bias, and limited transfer. RLbF therefore treats decoding as a separate component: in the near term, a specialized calibrated model may be sufficient; in the longer term, stronger EEG foundation models may become attractive backbones for these decoders. The immediate point is to use decoded cognitive-state estimates as a noisy continuous reward for language-model adaptation.

The survey also suggests a longer-term opportunity. If RLbF deployments generate large corpora of aligned EEG traces, decoder outputs, context windows, and outcomes from real interaction, they may eventually supply the kind of ecologically valid data that current EEG modeling lacks for interactive settings. This possibility is prospective rather than demonstrated here. Separately, the EEG foundation model field still needs controlled ablation of pretraining objectives, systematic representation diagnostics, unified clinical evaluation protocols, and exploration of under-tested architectures (state-space models for long recordings, diffusion representations for classification).

2.5 Comparison Summary and the Research Gap

The following comparison captures the key dimensions along which RLbF differs from prior approaches:

| Approach | Feedback Type | Temporal Resolution | Adaptation Mechanism | Generative? |

|---|---|---|---|---|

| RLHF (Ouyang et al., 2022) | Discrete preferences (voluntary) | Per-response | Post-training via reward model + PPO/DPO | Yes (LLM) |

| RLAIF (Bai et al., 2022) | AI preferences (synthetic) | Per-response | Post-training via AI reward model | Yes (LLM) |

| Affective tutoring (D’Mello and Graesser, 2012) | Facial expression, dialogue cues | Per-interaction (~seconds) | Rule-based strategy selection | No |

| Neurofeedback (Sitaram et al., 2017) | EEG/fMRI (involuntary) | Continuous (~sub-second) | Stimulus parameter adjustment | No |

| Content modulation (Furman and Kwalwasser, 2021) | Decoded EEG cognitive states | Continuous (~sub-second) | Content parameter modulation | No |

| Empathetic dialogue (Rashkin et al., 2019) | Text cues (voluntary) | Per-response | Supervised training on empathetic labels | Yes (LLM) |

| RLbF (this work) | Decoded EEG cognitive states | Continuous (~sub-second) | Post-training via neurophysiological reward | Yes (LLM) |

No existing approach combines (a) generative language models, (b) continuous involuntary neurophysiological feedback, and (c) post-training rather than rule-based adaptation. The empathetic dialogue row illustrates that text-only approaches can train generative models for empathic communication, but rely on voluntary text cues rather than involuntary neurophysiological signals: they learn to respond to what users say about their feelings, not to how users actually feel. This comparison highlights dimensions favorable to RLbF; on other dimensions – hardware requirement, training population size and diversity, empirical validation, and content quality training – RLHF is clearly superior (see Section 2.1). RLbF synthesizes elements from each prior approach that no single existing method integrates.

3. The Reinforcement Learning from Brain Feedback (RLbF) Framework

In this section we formalize the RLbF framework: the cognitive state space, the feedback loop, the temporal delay model, and the reward function. We’ve designed this framework to be empirically testable independently and in a variety of forms. Accordingly, all notation is defined with each component generating predictions that can be validated or disproven directly by data.

3.1 Cognitive State Space

We define the cognitive state at time t as a vector st = (st(1), …, st(d)) ∈ [0,1]d, where each dimension represents a distinct cognitive construct normalized to the unit interval. We define d = 5 dimensions based on established EEG correlates and real-time decodability: enjoyment (frontal alpha asymmetry — the difference in alpha-band (8–12 Hz) EEG power between left and right frontal regions, associated with approach motivation; Davidson, 1992), cognitive workload (frontal theta power — theta-band (4–8 Hz) EEG power over frontal regions, which increases with mental effort; Gevins et al., 1997), auditory focus (cortical tracking — the synchronization of neural activity to the temporal structure of attended speech; Ding and Simon, 2012; Mesgarani and Chang, 2012), flow state (increased frontal theta with moderate frontocentral alpha; Katahira et al., 2018; Csikszentmihalyi, 1990), and stress/arousal (elevated beta power — increased power in the beta band (13–30 Hz), associated with arousal and anxiety; Al-Shargie et al., 2016). The continuous [0,1] representation preserves gradient information essential for reward computation and the set is robustly extendable to additional dimensions.

Cognitive state measurements are subject to both inter-individual variation (handled by per-user calibration) and intra-individual drift from fatigue and habituation. The framework therefore requires some form of baseline normalization that separates slow drift from utterance-related changes: sresponse(τ) = s(τ) − sdrift(τ), where sdrift(τ) is an estimate of the slowly varying baseline. The practical severity of this challenge is substantial: frontal theta changes of 0.2–0.4 on the [0,1] scale over one-hour sessions due to fatigue drift alone (Gevins et al., 1997) may exceed per-utterance cognitive responses of 0.05–0.15 (estimated), making robust detrending essential. We note that linear subtraction of this kind is an idealized approximation; robustly solving the resulting covariate shift and disentangling transient event-related potentials from this baseline remains a critical open challenge for the field, which we detail fully in Section 9.3.

3.2 The Feedback Loop and POMDP Formulation

At each turn t, the agent observes (ct, st) (conversation context and cognitive state), generates utterance ut ∼ πθ(u | ct, st), delivers it, waits through a temporal delay δt, measures the post-utterance cognitive state st+1, and computes reward. This defines a Partially Observable Markov Decision Process (POMDP): the cognitive state vector is a low-dimensional projection of the user’s full internal state, and the agent acts under uncertainty about unobserved dimensions.

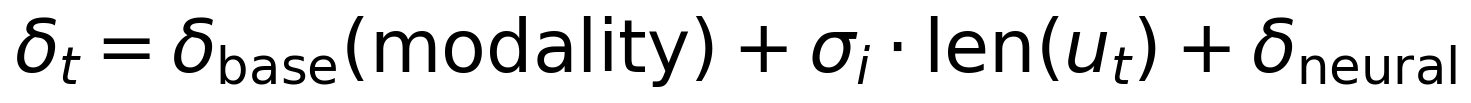

The temporal delay is modeled as:

where δbase is modality-dependent, len(ut) is measured in tokens, σi is a per-user processing speed factor (in seconds per token, where i indexes the user), and δneural is the neural processing pipeline latency (estimated at 1–3 seconds). Because cognitive responses are not instantaneous and the exact onset time is uncertain, we do not use the cognitive state at a single time point. Instead, the post-utterance cognitive state st+1 is defined as a weighted average over a Δ-second window following the estimated response onset:

where s(τ) is the continuous cognitive state signal at time τ after utterance delivery, Δ is the window duration (a hyperparameter, typically 10 seconds), and w(τ; t) ≥ 0 is a temporal weighting function. The specific shape of w(τ; t) is an implementation choice. The choice of Δ represents a deliberate trade-off between temporal precision and noise robustness.

For streaming voice mode, where overlapping utterances create entangled cognitive responses, we recommend paragraph-level reward assignment as the practical starting point, with per-sentence deconvolution as a research direction. Text-based turn-by-turn interaction uses the per-turn model directly.

The optimization objective is the undiscounted finite-horizon return over a conversation session: we sum rewards across turns without a discount factor, because conversations are finite interactions where later turns are not inherently less valuable than earlier ones.2

3.3 The Three-Component Reward Function

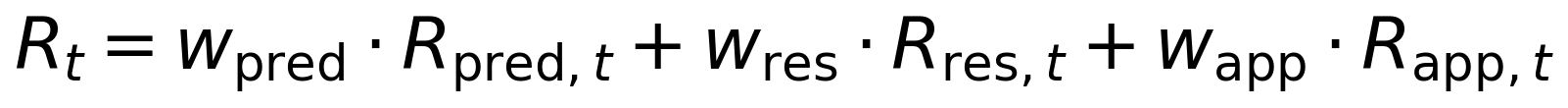

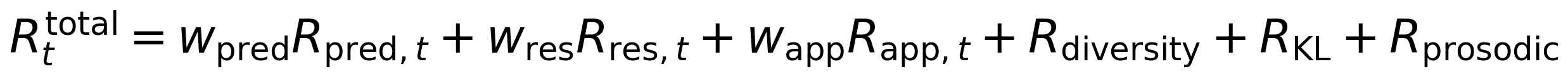

The reward at each turn is:

subject to wpred + wres + wapp = 1, wpred, wres, wapp ≥ 0.

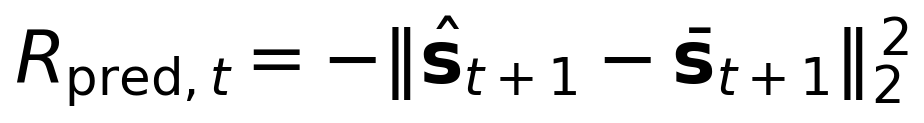

Prediction accuracy (Rpred) rewards the agent for accurately predicting cognitive state transitions. A learned predictor fϕ maps the current context, cognitive state, and utterance to a predicted next cognitive state ŝt+1 = fϕ(ct, st, ut):

This trains a computational analogue of empathy – an internal model of how words affect the listener. We note a subtle perverse incentive: the model could maximize prediction accuracy by steering toward predictable interactions. The resonance reward and Kullback–Leibler (KL) divergence penalties counteract this tendency, but monitoring for predictability-seeking behavior during training is recommended. As an additional countermeasure, we recommend normalizing Rpred by prediction difficulty – dividing by the variance of cognitive state transitions observed in similar conversational contexts – so that easy-to-predict interactions do not yield disproportionately high reward. This difficulty normalization should be treated as a recommended component of the Phase 3 training protocol rather than an optional enhancement.

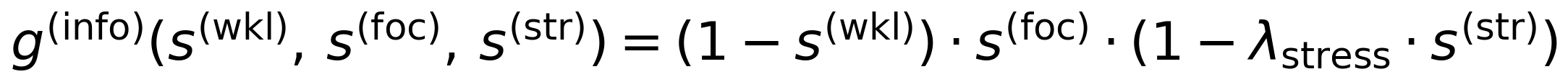

Cognitive resonance (Rres) rewards communication style adaptation through two concrete operationalizations. Information density matching reduces information density (measured as mean surprisal under a fixed reference language model, normalized to [0,1]) when workload is high, according to:

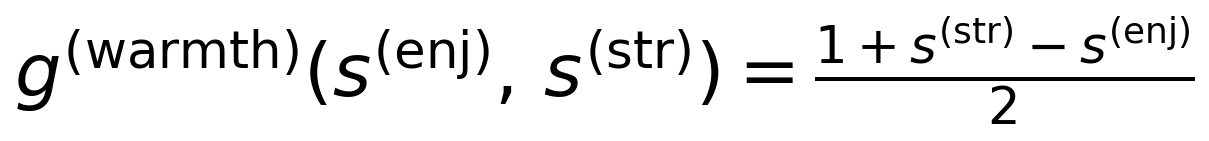

where s(wkl), s(foc), s(str) denote the workload, auditory focus, and stress dimensions of the cognitive state vector (Section 3.1), and λstress ∈ [0,1] is a stress-workload interaction modifier that distinguishes productive challenge from overwhelm. Emotional tone alignment targets a warmth level that increases when stress is high and enjoyment is low:

where s(enj) denotes the enjoyment dimension. When stress exceeds enjoyment, the target warmth rises above 0.5; during positive engagement (high enjoyment, low stress), the target drops, allowing the model to maintain stylistic stability during flow states. Both operationalizations are presented as testable hypotheses: the specific target functions are starting points grounded in Cognitive Load Theory (Sweller, 1988; Bjork and Bjork, 2011), attentional control theory (Eysenck et al., 2007), and therapeutic alliance research (Horvath and Symonds, 1991; Barrett-Lennard, 1962), not settled definitions. The CLT grounding extends to the information density target itself: the “desirable difficulties” framework (Bjork and Bjork, 2011) predicts that moderate-to-high information density is beneficial when cognitive capacity permits, supporting the non-zero target implied by Eq. 5 when workload is low. We also note that the stress-workload interaction modifier λstress is the only cross-dimension interaction currently specified; whether additional interactions (e.g., between enjoyment and focus, or between flow and workload) improve the resonance reward remains an empirical question for future work on the joint target function design. A learned joint target function is the principled long-term solution.

Application-layer reward (Rapp) is an optional, context-dependent term for deployment-specific objectives (learning gain in tutoring, distress reduction in therapeutic support). The default is wapp = 0: pure empathic attunement with no externally specified goal.

The complete constrained reward (Eq. 6) extends the three-component reward (Eq. 3) with diversity, KL-divergence, and prosodic diversity penalties to prevent reward hacking (exploiting loopholes in the reward function to achieve high scores without the intended behavior) — including convergence on rhythmic patterns that entrain neural oscillations (Giraud and Poeppel, 2012), a concrete vulnerability given the auditory focus dimension’s sensitivity to prosodic structure.

The three-component reward design reflects RLbF’s structural differences from existing alignment methods. Of the eleven dimensions in which RLbF and RLHF diverge, the three limitations identified in Section 1.2 are the most fundamental: RLbF increases feedback density by orders of magnitude (1+ Hz versus one label per response) and replaces population-level aggregation with individual specificity. As discussed in Section 2.1, RLbF is complementary to RLHF, not a replacement: RLHF trains for content quality through socially constructed preferences that require deliberate judgment, while RLbF trains for communication style adaptation through involuntary cognitive signals.

With the mathematical framework established, the next step is its concrete realization: the Isaac platform, which implements the cognitive feedback loop, tokenizes the state space for LLM consumption, and supports both closed-loop and open-loop inference.

4. System Implementation: The Isaac Platform

4.1 The Isaac Architecture

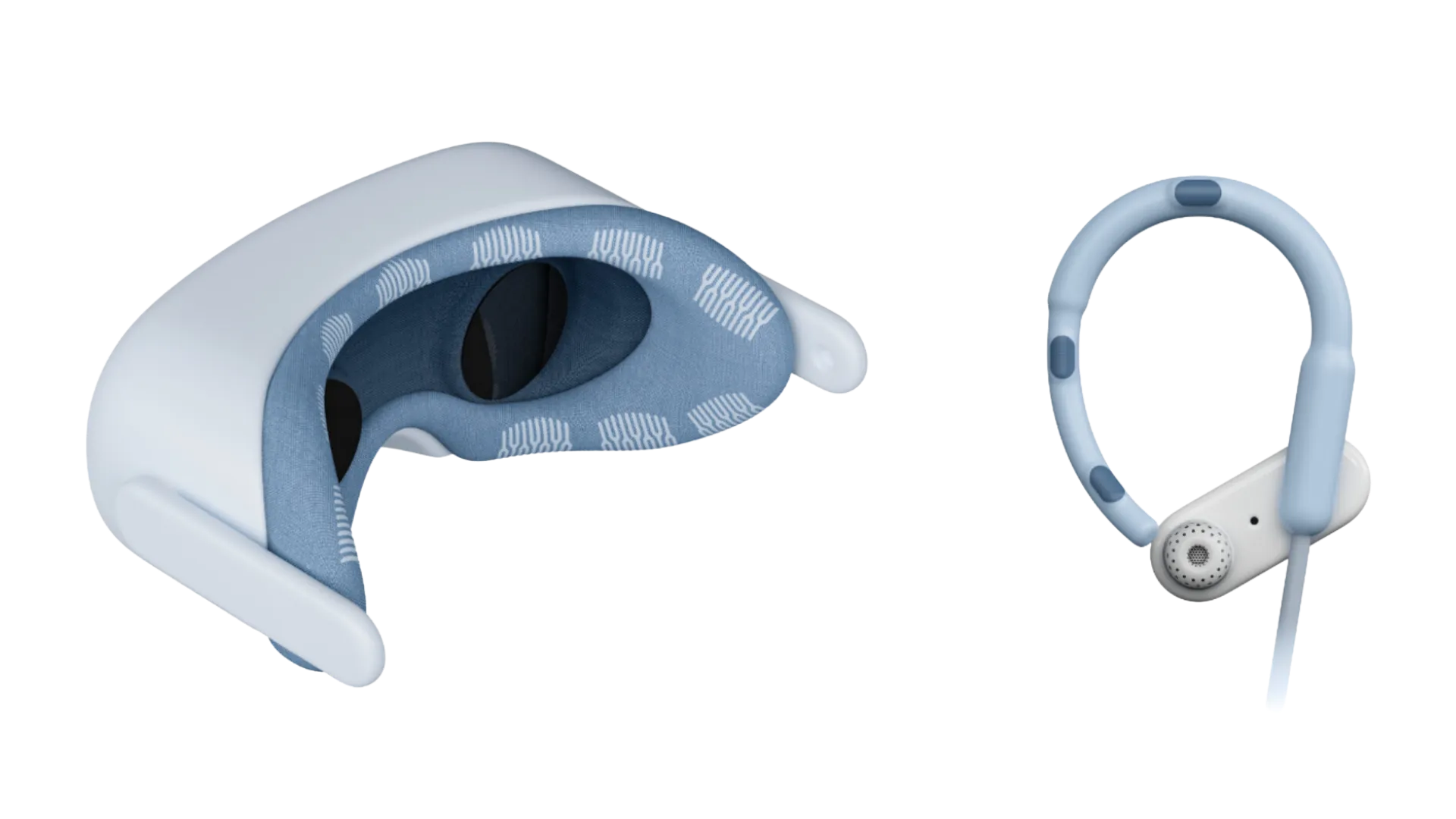

To realize the RLbF POMDP described in Section 3, we built Isaac, a platform that maps the abstract theoretical variables to a live software architecture. The system implements a closed loop: the user’s cognitive state, measured via EEG and decoded by Arctop’s on-device pipeline, feeds back into the language model’s context, shaping every subsequent utterance. Seven components form the cycle: the user’s brain produces neural activity; EEG hardware acquires raw signals; the Arctop on-device decoder transforms raw EEG into cognitive scores (enjoyment, workload, focus, flow, stress) emitted at 1 Hz, with raw EEG never leaving the device; a cognitive state tokenizer converts scores into a representation for the LLM; the LLM generates text conditioned on the augmented context; and a stream controller delivers text sentence by sentence, pausing between sentences to allow cognitive state updates. The sentence is the atomic unit of cognitive feedback, with an estimated minimum loop period of 3–5 seconds.

This design takes as inspiration the elegant formulations of late mathematician and natural philosopher Sir Isaac Newton, who in 1686 published his treatise on the three laws of motion the “Principia Mathematica Philosophiae Naturalis.” A fundamental insight was that (law #3) for every action in nature there is an equal and opposite reaction. For our current purposes with RLbF, a corollary is proposed to this physical law: when object ‘AI’ exerts a force on object ‘Brain,’ then object ‘Brain’ also exerts a force on object ‘AI.’

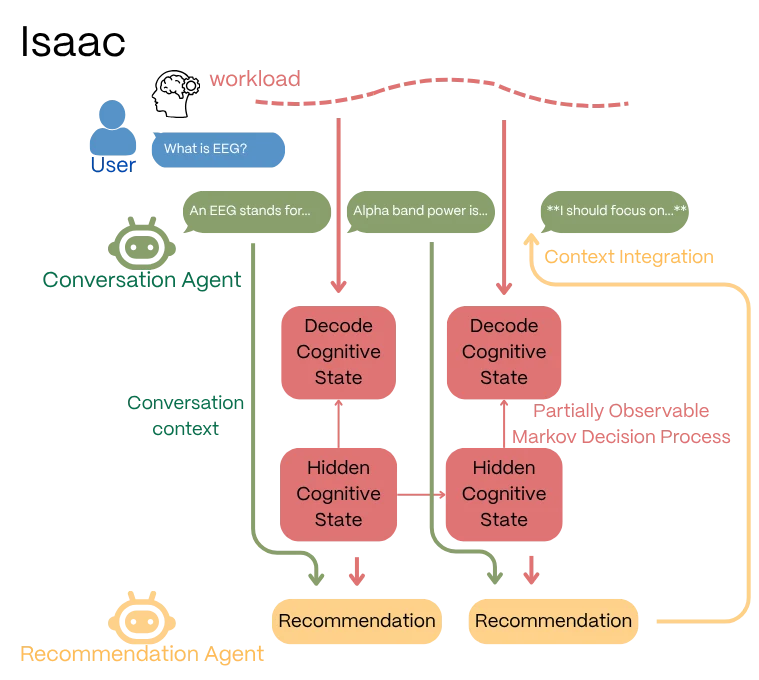

The software platform we use for exploration and testing related to this topic, ‘Isaac’ (Arctop Inc., 2026, v1.15. https://arctop.com/), implements a dual-agent architecture: an AI conversation agent that speaks with the human user, plus an AI recommendation agent that interprets cognitive state patterns from the human and advises the AI conversation agent, serving as a simulacrum of sorts for human neurobiologically-emergent and felt ‘empathy.’

The CognitiveTracker buffers scores, detects significant shifts, and computes trends. The RecommendationAgent generates natural language advisories (e.g., “Workload has risen sharply. Consider simplifying your next response.”). The StreamController manages sentence-level delivery. The SessionRecorder captures timestamped interaction data for RLbF training. This dual-agent pattern is a deliberate stepping stone and future versions follow several distinct branches to help flesh out the best architectures for this structure of contextual data.

It is critical to distinguish Isaac’s current proof-of-concept architecture from the final RLbF-trained model. The dual-agent structure is a prompt-based scaffolding designed to bypass the need for architectural modifications to the base LLM during initial data collection. By translating continuous cognitive scores into natural language advisories, the Recommendation Agent allows an off-the-shelf instruction-tuned model to simulate empathic adaptation. However, this is a transitional architecture. In the fully realized Phase 3 RLbF model, the policy is optimized directly against the mathematical, three-component reward (Rtotal). At that stage, the LLM internalizes the cognitive state mappings into its weights, rendering the Recommendation Agent obsolete for real-time inference and resulting in a lower-latency, single-agent system.

4.2 Signal Processing Engineering

Isaac’s signal-processing layer instantiates the abstract requirements defined in Section 3 with specific, modular engineering choices. For the baseline normalization in Section 3.1, Isaac estimates the slowly varying baseline sdrift(τ) as a 60-second moving average of the cognitive state signal, subtracted from the raw scores before reward computation and predictor training. This window is long enough to average out utterance-scale fluctuations but short enough to track fatigue and habituation drift. For the temporal integration weighting w(τ; t) in Eq. 2, Isaac uses a trapezoidal window with ramp-up and ramp-down periods, which avoids sharp boundary artifacts while remaining simple to implement; a truncated Gaussian centered at δt + Δ/2 is a principled alternative. Both choices are modular: the framework accommodates different baseline estimators and window shapes, and Section 9.3 details known limitations of linear-subtraction detrending that motivate more sophisticated transfer-learning approaches in future work.

4.3 Cognitive Tokenization via Prompt Injection

We recommend natural language injection as the primary tokenization strategy: cognitive state is injected as a natural language string (e.g., “Cognitive workload: 0.85 (high – approaching overload)”) into the LLM’s context between sentences. This requires no architectural modifications, preserves full numerical precision, supports transparency (the injected text is human-readable for auditing), and works with any instruction-tuned model. Learned embeddings are the principled long-term optimization for production systems, but natural language injection is preferred for the research phase.

4.4 Voice Mode vs. Text Mode

The architecture supports two delivery modalities with different temporal dynamics. Voice mode introduces TTS latency (200–800ms per sentence), fixed delivery rate (~150 words/min), and auditory processing dynamics where the auditory focus dimension is directly informative. Text mode eliminates TTS latency but introduces variable reading speed (200–400 words/min) and self-paced processing, making temporal alignment less precise. The LLM uses the same weights for both modalities; modality-specific adaptation is learned during RLbF training from interaction data, not hard-coded. A modality parameter affects the cognitive state tokenizer (labeling which dimensions are relevant), the stream controller (switching delivery mechanism), and the delay model parameters.

4.5 Individual Calibration

Users differ in baseline cognitive state patterns, cognitive responses to language, and processing speed. A brief calibration session (estimated at 5–10 minutes) at first interaction establishes individual baselines (resting-state measurement), response characteristics (controlled stimuli eliciting known cognitive patterns), and conversational processing speed. Two adaptation strategies are supported: a few-shot cognitive profile injected as natural language into the system prompt (default for new users), and lightweight parameter adaptation (LoRA – Low-Rank Adaptation; Hu et al., 2022 – of the transition predictor) for returning users with accumulated data.

4.6 Inference Modes

Both closed-loop (with EEG) and open-loop (without EEG) modes use identical model weights. The only difference is the source of cognitive state: observed scores from the Arctop decoder in closed-loop mode, or predicted scores from the internal transition predictor fϕ in open-loop mode. The cognitive state tokenizer checks whether an Arctop score stream is available; if yes, it uses observed scores; if no, it queries the internal predictor. The model does not know which source is providing the scores – it receives the same natural language injection format in both cases. This design enables smooth transitions between modes, including mid-session device removal, and makes trained empathic capabilities available to users without EEG hardware.

The Isaac platform described above provides the runtime infrastructure; what remains is the training procedure that transforms a standard instruction-tuned LLM into a model capable of exploiting this infrastructure for empathic communication.

5. Training Pipeline

The pipeline is designed to transform a standard instruction-tuned LLM into a model that natively understands and responds to real-time cognitive state signals through three phases of increasing complexity. Phases 1 and 2 use Isaac’s prompt-injected dual-agent scaffolding (Section 4) to generate and collect training data; Phase 3 then trains a single pure RLbF policy that replaces the scaffolding and natively optimizes against the reward math defined in Section 3.

5.1 Phase 1: Supervised Fine-Tuning for Cognitive State Comprehension

Phase 1 teaches the model to parse cognitive state injections and adjust communication style accordingly. This phase is necessary because standard instruction-tuned LLMs have no prior exposure to cognitive state tokens; without supervised grounding, the model would treat injected scores as noise rather than actionable context, undermining the reward signal in subsequent phases.

Synthetic data is generated through three layers. Template-based generation defines cognitive state profiles (overwhelmed, productively challenged, bored, in-flow, stressed-but-coping, relaxed) with expected communication adaptations grounded in the resonance reward’s target functions. LLM-augmented variation expands coverage to thousands of diverse (conversation, cognitive_state, response) triples, ensuring that the model encounters cognitive state signals across a broad range of topics, registers, and conversational depths. Isaac advisory bootstrapping grounds the synthetic distribution in real cognitive state patterns from existing session logs, mitigating the risk that purely synthetic data teaches the model to respond to cognitive state profiles that do not occur in practice.

Success criteria include cognitive state parsing accuracy (>85% agreement with an LLM judge), measurable style adaptation (information density decreasing by at least 15% under high workload), appropriate comprehension check insertion, and no degradation of general conversational quality (within 5% on standard benchmarks). Estimated data requirements: 15,000–25,000 training examples (these figures are preliminary estimates pending empirical calibration).

5.2 Phase 2: Prediction Calibration with Real EEG Data

Phase 2 solves the hardest technical challenge: learning to predict how utterances affect cognitive state from real Isaac session recordings. This is the phase that grounds the framework in empirical reality – the transition from synthetic cognitive state profiles (Phase 1) to measured human cognitive responses. Its success determines whether the prediction accuracy reward (Rpred) and the open-loop inference mode (Section 4.6) are feasible: if the predictor cannot learn meaningful dynamics from real data, neither the reward signal nor the open-loop transfer hypothesis has a foundation.

An extraction pipeline processes raw recordings through sentence-level segmentation, drift detrending (60-second moving average subtraction), pre-utterance state measurement, post-utterance windowed aggregation, and quality filtering, producing aligned (ct, st, ut, st+1) tuples. Conservative temporal attribution truncates observation windows at the onset of subsequent utterances to prevent cross-sentence contamination. Quality filtering discards turns where EEG signal quality falls below a per-channel threshold or where the temporal gap between utterance delivery and state measurement falls outside the delay model bounds (Eq. 1), ensuring that the training set contains only reliably attributed cognitive state transitions.

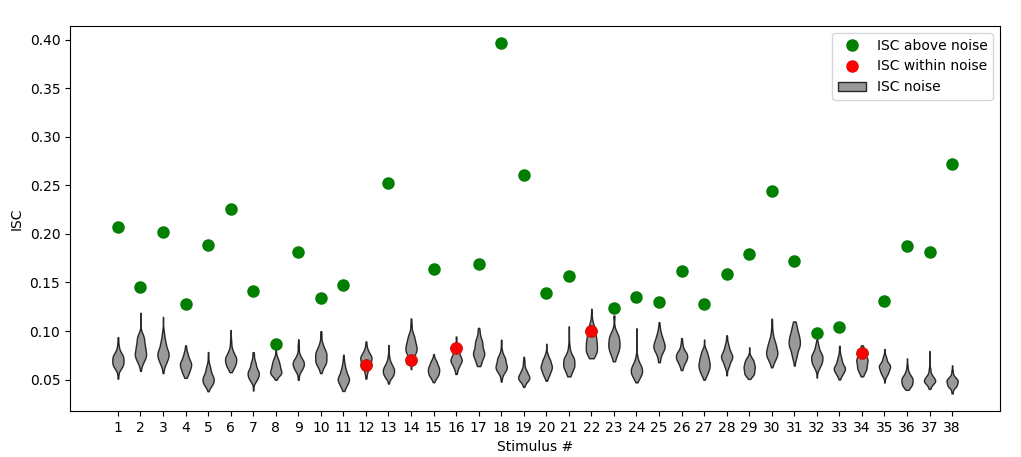

The transition predictor fϕ is trained on these tuples with population-level user embeddings that capture individual variation within a single model. Success criteria include per-dimension prediction correlation exceeding 0.3 for at least 3 of 5 dimensions on held-out users, outperforming the no-change baseline by at least 15%, and an autoregressive open-loop horizon exceeding 10 turns. We estimate the minimum data requirements to perform this sufficiently to be: 250–500 sessions from 30 unique and unrelated EEG-equipped users.

A significant challenge in predicting cognitive state transitions from text is the problem of temporal credit assignment: isolating which specific tokens within an utterance evoked which specific neurological response. Human cognitive states do not shift in response to structural filler words (e.g., ‘the’, ‘is’), but rather have their own unique timescales that anchor to semantically salient or high-arousal tokens. To solve this, we leverage the natural language logs generated by the Isaac Recommendation Agent during Phase 1 data collection. The Recommendation Agent’s historical advisories serve as a semantic mask during Phase 2 predictor training. By aligning the system’s structural understanding of high-impact words with the recorded temporal delays, the predictor learns to ignore syntactic filler and attribute cognitive state changes specifically to the high-arousal tokens within the context window. This prevents the model from smearing the predicted cognitive response uniformly across an entire sentence.

5.3 Phase 3: Reinforcement Learning with Empathic Reward

Phase 3 is the step at which the model leaves the Isaac scaffolding behind. In Phases 1 and 2 the target model sits inside Isaac’s prompt-injected dual-agent loop (Section 4.1), receiving RecommendationAgent advisories as part of its context. In Phase 3, PPO trains the model to operate as a single policy that reads the cognitive-state injection directly and natively optimizes against the reward math in Section 3, with the RecommendationAgent retained only at training time (as the semantic mask for the Phase 2 predictor, §5.2) and absent at inference.

Phase 3 optimizes the policy using Proximal Policy Optimization (PPO; Schulman et al., 2017) against the full reward function:

with the Phase 1 SFT (Supervised Fine-Tuning) model as the starting and reference policy and the Phase 2 prediction model providing Rpred.

We choose PPO over the DPO family (Rafailov et al., 2023) based on a specific analysis of RLbF’s requirements, not a generic preference. The DPO landscape has evolved substantially: KTO (Ethayarajh et al., 2024) eliminates the need for pairwise preference data by optimizing against a per-example utility function, online iterative DPO variants (Guo et al., 2024; Xu et al., 2024) address the original algorithm’s offline limitation, and reward-weighted regression methods can handle continuous reward signals. These advances make DPO-family methods viable for a wider range of alignment problems than the original formulation allowed. Nevertheless, three properties of the RLbF setting specifically favor PPO:

First, RLbF’s reward is a composite of six components (Rpred, Rres, Rapp, Rdiversity, RKL, Rprosodic) with different scales, noise characteristics, and update frequencies. PPO’s learned value function (which estimates expected future reward from a given state) can internalize the relative scaling and variance of these components, enabling stable optimization against the composite signal. DPO-family methods would require collapsing this structure into a single scalar or pairwise ranking before optimization, losing information about which reward components drive each comparison.

Second, the prediction model fϕ continues to update during Phase 3 as the policy generates novel utterances that fall outside the Phase 2 training distribution. This creates a non-stationary reward landscape where online exploration – generating utterances, observing their predicted cognitive effects, and updating the policy – is essential. PPO’s on-policy sampling (generating new training data from the current policy rather than reusing old data) naturally supports this co-evolution of policy and reward model. While online DPO variants address the static-dataset limitation of the original algorithm, they still optimize against pairwise preference rankings rather than directly against a continuous, multi-dimensional reward signal that itself evolves during training.

Third, RLbF requires credit assignment across sentence boundaries: a single utterance’s cognitive impact unfolds over a temporal window (Section 3.2), and the reward signal reflects the cumulative effect of multiple conversational turns on cognitive state trajectories. PPO’s value function learns to estimate long-horizon returns, enabling credit assignment (determining which earlier actions caused later outcomes) that connects current utterance choices to downstream cognitive outcomes. This temporal credit assignment problem does not map naturally onto the pairwise comparison framework that underlies DPO and its variants, including KTO’s per-example formulation.

The training supports both online (live Isaac sessions with real-time EEG) and offline (replaying Phase 2 session recordings with the prediction model) modes. In offline mode, the prediction model estimates cognitive state transitions for policy-generated utterances that differ from the recorded ones, introducing distributional shift (the mismatch between the training data distribution and the data the current policy generates) that limits the extent of offline optimization. A hybrid approach – offline pre-optimization to get a reasonable policy, followed by online fine-tuning with live EEG feedback – is recommended to balance data efficiency with the correction that real interaction provides. The recommended three-stage sequence and its performance-based transition criteria are:

- Offline RL warm-up (1–2 epochs over Phase 2 recordings): Establishes a baseline policy using conservative reward estimation.

- Hybrid online/offline (iterative): Short online sessions (15–30 minutes) with EEG-equipped users, combined with continued offline training. Gradually shifts the data distribution toward on-policy.

- Full online RL: Standard PPO training with live interaction for final optimization.

Transition criterion: offline to hybrid. Advance from offline-only to hybrid training when the offline-trained policy achieves Rres ≥ 1.10 × RresSFT (at least 10% improvement in resonance reward over the Phase 1 SFT baseline) on a held-out set of Phase 2 recordings, AND the mean estimated reward R̂total has plateaued (less than 2% relative improvement over the preceding 500 gradient steps). The first condition ensures the policy has learned nontrivially from offline data; the second ensures that offline returns are saturating and further improvement requires on-policy data.

Transition criterion: hybrid to full online. Advance from hybrid to full online training when two conditions hold: (1) the per-dimension prediction correlation of fϕ on the most recent online session batch meets or exceeds the Phase 2 baseline threshold (r ≥ 0.3 for at least 3 of 5 cognitive dimensions), confirming that the co-trained prediction model has adapted to the evolving policy distribution; and (2) the ratio of online-to-offline reward diverges by less than 10%, i.e., |Ronline − R̂offline| / Ronline < 0.10, indicating that the offline reward estimates have become unreliable enough that continued offline training adds diminishing value. At this point, the policy is stable enough for sustained live interaction and the reward signal quality justifies full online operation.

The KL divergence penalty coefficient βKL may require higher values (0.05–0.2) than typical RLHF settings because the cognitive state reward signal is noisier than human preference judgments. Training is monitored continuously for reward hacking indicators (Anthropic, 2025; Fu et al., 2025):

- Prosodic convergence

- Semantic diversity collapse

- Complexity collapse

- Question frequency spikes

- Warmth saturation

The specific values of constraint hyperparameters – including βKL, the diversity penalty weight, and the prosodic diversity coefficient are not fixed a priori but are to be calibrated empirically during Phase 3 training. Initial ranges are informed by the RLHF literature and the noise characteristics of EEG-derived reward, but final values should be determined through hyperparameter search on a validation set of held-out session recordings, with the reward hacking indicators above serving as diagnostic criteria for under- or over-regularization.

6. Open-Loop Empathic Transfer

Terminological note: In this section, we use “agent” in the decision-theoretic context (the POMDP actor) and “model” when discussing the underlying LLM; these refer to the same system.

6.1 The Hypothesis

The open-loop transfer hypothesis determines whether RLbF is a general-purpose training methodology or a niche technique for BCI users: does the benefit of brain feedback training persist when the EEG signal is removed at inference?

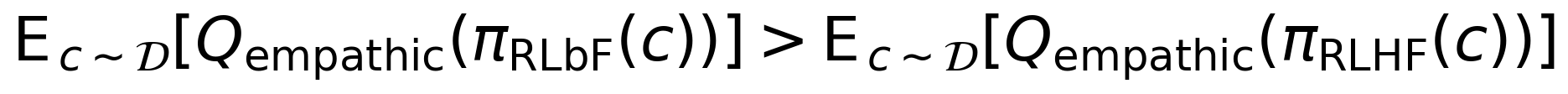

Formally, letting πRLbF denote the RLbF-trained policy and πRLHF denote a standard RLHF-trained policy from the same base model, the hypothesis claims that in open-loop deployment (no EEG):

where Qempathic is a composite empathic quality measure and 𝒟 is the evaluation task distribution. If true, RLbF becomes a general training methodology: train with brain feedback on a moderately sized population, deploy without brain feedback to all users. If false, RLbF is limited to closed-loop applications.

This claim is a hypothesis, not a demonstrated result. The experimental design below (Section 7.4) specifies the falsification criterion for Eq. 7.

6.2 Theoretical Grounding

Three independent perspectives support the hypothesis. First, the Arctop synthetic brain model (Furman and Kwalwasser, US20210390366A1) demonstrates in commercial deployment that models trained on paired (content, neural) data can predict from content features alone – the neural features serve as privileged information (Vapnik and Vashist, 2009; Lopez-Paz et al., 2016) during training. Second, RLHF generalization research shows that models trained with preference feedback generalize beyond their reward distribution (Kirk et al., 2024), and RLbF’s richer reward signal (Section 1.2) may provide favorable conditions for at least comparable generalization. Third, cognitive science research on theory of mind (ToM) (Premack and Woodruff, 1978; Baron-Cohen, 1995), including simulation theory accounts of mindreading (Gallese and Goldman, 1998; Goldman, 2006), shows that skilled human communicators develop internal models of their interlocutors through rich multimodal feedback, then apply these models effectively in feedback-impoverished settings.

6.3 Two Levels of Transfer

We distinguish style transfer (Level 1, likely) from state inference (Level 2, aspirational). At Level 1, the model has internalized population-level regularities about what kinds of language produce what kinds of cognitive responses – adaptive information density, emotional calibration, engagement preservation, comprehension checking. At Level 2, the model infers specific users’ cognitive states from conversational cues alone. Level 1 is the conservative claim; Level 2 requires the model to develop a genuine computational theory of mind and remains a research goal.

A caveat is warranted regarding the ToM analogy. Whether LLMs can develop genuine theory of mind – as opposed to surface-level behavioral mimicry of mentalizing – is scientifically contested (Ullman, 2023), and claims of emergent ToM in large models have faced significant methodological criticism. This debate bears directly on the plausibility of Level 2 transfer, which depends on the assumption that an LLM can learn to infer individual cognitive states from conversational cues in a manner analogous to human mentalizing. Level 1 transfer, by contrast, requires only that the model acquire statistical regularities between language patterns and population-level cognitive responses, a form of distributional learning that does not presuppose ToM. We therefore regard Level 2 as the more speculative claim in the framework and flag it as dependent on assumptions that remain open questions in the field.

6.4 The Sycophancy Counterargument

The most important counterargument is that the model has learned to be generically “nicer” rather than genuinely adaptive, producing warmer, simpler responses without sophisticated context-dependent adaptation. We identify this as “neurological sycophancy”: the displacement of deliberative sycophancy to the neural level, where the model optimizes for brain pleasure signals rather than genuine communicative benefit. Five ordered diagnostic tests are designed to distinguish empathic adaptation from generic niceness:

- Context-dependent complexity modulation

- Appropriate challenge (correcting errors despite comfort cost)

- Information density adaptation to inferred expertise

- Emotional specificity across different negative emotions

- Cognitive state trajectory prediction accuracy

6.5 Experimental Design

The open-loop transfer hypothesis is empirically testable through a controlled comparison of models trained with and without brain feedback, evaluated with and without EEG at inference. The experimental logic requires four conditions from the same base model: a Baseline LLM (no post-training), an RLHF-trained control (same base model fine-tuned with standard RLHF, isolating the post-training signal as the sole variable), an RLbF-trained closed-loop ceiling condition, and the critical test: an RLbF-trained model deployed open-loop (without EEG). The critical comparison is open-loop RLbF versus RLHF: if the open-loop RLbF model demonstrates superior empathic quality despite having no access to EEG at inference, this constitutes evidence that brain feedback training produces durable communication skills. Section 7.4 specifies the full experimental protocol, including the within-subjects Latin square design, sample size justification, statistical tests, and confound controls.

7. Roadmap to Empirical Validation

Our proposed evaluation framework is organized into four tracks, each targeting a different category of claims to be empirically tested. The protocol is specified at pre-registration quality: with each metric, statistical test, baseline, and sample size defined with enough detail for reproducibility. The protocol distinguishes pilot evaluations (feasible with current Isaac data, suitable for arXiv) from full evaluations (requiring dedicated recruitment, journal submission).

7.1 Track A: Prediction Accuracy

This track evaluates whether the cognitive state predictor fϕ learns useful dynamics of how language affects cognition. The test set comprises held-out Isaac sessions with strict user-level partitioning: no user appears in both training and test sets. The primary evaluation uses leave-one-user-out cross-validation across a minimum of 30 unique users, ensuring that prediction accuracy reflects generalization to new individuals rather than memorization of user-specific patterns.

Metrics include per-dimension mean absolute error (target: MAE < 0.10 on the [0,1] scale, representing the perceptual boundary between comfortable and effortful processing, and a meaningful improvement over the expected persistence-baseline MAE of approximately 0.12), Pearson correlation between predicted and observed cognitive state transitions (target: r > 0.5, anchored to the EEG-based affect recognition literature where models typically achieve r = 0.4–0.7; Koelstra et al., 2012), and change-based Δ-MAE (target: < 0.08). Four baselines establish the performance floor: random prediction, population mean, persistence (predicting no change), and linear regression on hand-crafted utterance features (token count, GPT-2 surprisal, VADER sentiment). Users contributing fewer than 3 sessions are excluded from the k-fold cross-validation to ensure sufficient within-user data. An open-loop accumulation test further assesses whether prediction accuracy degrades gracefully over multi-turn conversations or exhibits compounding error drift.

7.2 Track B: Self-Report Validation

This track validates perceived empathic quality and establishes convergent validity between self-report and EEG-derived measures. Four adapted instruments capture complementary constructs: the Working Alliance Inventory (WAI-SR) measures perceived rapport and collaboration, the Interpersonal Reactivity Index empathic concern subscale (IRI-EC) measures perceived empathy, the NASA Task Load Index (NASA-TLX) measures perceived cognitive demand, and a custom 5-item RLbF Adaptation Perception Scale measures perceived responsiveness to the participant’s cognitive state.

The WAI-SR and IRI-EC were originally validated for human-human therapeutic interaction (Hatcher and Gillaspy, 2006; Davis, 1983); early work adapted the WAI-SR for human-agent interaction (Bickmore et al., 2005), but psychometric properties of these instruments in modern human-AI contexts remain an open empirical question. Assessing construct validity, internal consistency, and factor structure of the adapted instruments is therefore a planned component of this study’s pilot phase, rather than an assumed given. Self-report vs. EEG correlations test ecological validity across five pre-specified dimension pairs (e.g., NASA-TLX Mental Demand vs. mean session workload, target: r > 0.30; IRI-EC vs. mean enjoyment trajectory slope). These thresholds are grounded in published EEG-self-report correlation data: NASA-TLX vs. EEG workload indices typically achieve r = 0.3–0.6 (Wobrock et al., 2015; Kamzanova et al., 2014), while EEG-affect vs. self-report correlations are typically lower (r = 0.15–0.35; Koelstra et al., 2012). Holm-Bonferroni correction is applied across the 5 convergent validity tests to control the family-wise error rate.

7.3 Track C: Cognitive Trajectory Analysis

This track tests whether RLbF-trained models produce measurably different cognitive state trajectories compared to non-adaptive baselines. Rather than evaluating single-turn responses, Track C examines the temporal dynamics of entire conversations, capturing how the model’s adaptive behavior shapes cognitive experience over multi-turn interactions.

Four trajectory-level metrics are computed for each session: cognitive state stability (variance of each dimension relative to the baseline condition reference variance), recovery speed from overload and stress episodes (time to return below a per-dimension threshold after exceedance), trajectory coherence (mutual information between utterance features and subsequent cognitive state changes, estimated via the Kraskov k-nearest-neighbors estimator with k = 5; Kraskov et al., 2004), and cumulative time in the productive zone. The productive zone is defined per dimension (e.g., workload between 0.30 and 0.75, stress below 0.60) based on Cognitive Load Theory thresholds where learning and engagement are optimized.

7.4 Track D: Open-Loop Transfer A/B Test

This track provides the definitive test of the open-loop transfer hypothesis (Section 6.1, Eq. 7) through a controlled within-subjects comparison of four conditions from the same base model: Baseline LLM (no post-training), RLHF-trained (same base model fine-tuned with standard RLHF on a general preference dataset, isolating the post-training method as the sole variable), RLbF-trained closed-loop (ceiling – full EEG feedback during interaction), and RLbF-trained open-loop (the critical test – brain-feedback-trained model deployed without EEG). The EEG device actively records data in all four conditions; only the real-time data feed to the model varies (see evaluation protocol for details). To achieve the target of 60 completers (15 full Latin squares of 4 conditions), 70 participants are recruited to account for an estimated 15% attrition rate. Each participant completes four 35-minute conversational sessions across different topics drawn from a standardized pool with random assignment.

Eight pre-registered hypotheses are tested with repeated-measures ANOVA. Directional hypotheses (H1–H5, H7) are tested with one-tailed paired t-tests, as the predicted direction is specified a priori and the reverse direction is not theoretically meaningful. Hypotheses H1–H4 and H7–H8, which test closed-loop RLbF superiority over baselines, form one correction family (6 pairwise comparisons) with Holm-Bonferroni correction. The critical open-loop transfer hypothesis – that open-loop RLbF outperforms RLHF on the composite empathic quality measure – is tested as a separate, pre-registered primary hypothesis at α = 0.05 (uncorrected). This separation is justified because the open-loop comparison tests a conceptually distinct claim (whether brain-feedback training produces durable skills that transfer without EEG at inference) from the closed-loop superiority hypotheses (whether real-time EEG feedback improves communication quality). Testing it within the closed-loop family would penalize the study’s most novel claim for sharing a correction family with hypotheses it neither depends on nor competes with.