Five Levels of Explanation (Part I): How Brain-Computer Interfaces Work

An in-depth exploration of brain-computer interface technology in five levels of difficulty. This part covers levels 1 through 3.

What do you think of when you read “Brain-Computer Interfaces” in the title? Elon Musk’s Neuralink? Or perhaps you imagined interacting in a virtual world like Neo in “The Matrix” or controlling a Na’vi body in “Avatar”? Media, science-fiction novels, and movies have popularized the concept of “brain interface” technology (for inspiration, consider this list of BCI in fiction), but they have also often led to misunderstandings. Our company prefers the term “Cognition Technology.” We’ll leave that for a later post.

The field of brain-computer interfaces (BCI) – though deeply rooted in science fiction stories and fantasy – is now a solidly established technical field which blends hard science and applied engineering, and is rapidly becoming a mainstream consumer technology after decades of research. BCI is jumping out of the lab and into ubiquity, just as this year the technical field celebrates the 50th anniversary of the coining of the term “BCI” by UCLA researcher Jacques Vidal in 1973 (Vidal 1973).

What is BCI?

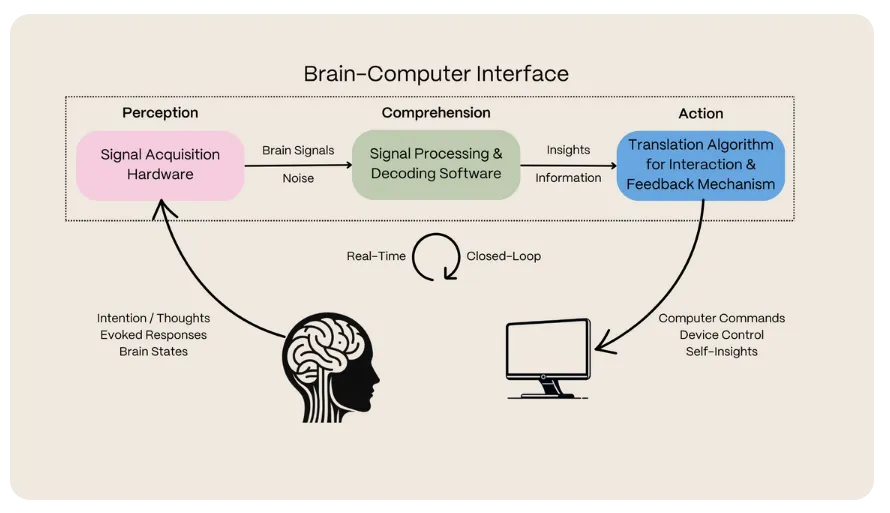

A brain-computer interface is a system that measures brain activity, decodes patterns with software, and translates those signals into useful outputs such as metrics, commands, or contextual feedback. Modern BCIs pair non-invasive sensors—like EEG headphones or earbuds—with AI models that run on edge or cloud infrastructure. The goal is to turn cognitive state, intent, or biometric signatures into actionable data in real time while keeping the experience comfortable and safe for everyday use.

Every BCI pipeline follows the same loop: sense neural signals, process them with algorithms, and deliver feedback to the user or attached device. Improvements in wearable hardware, signal processing, and machine learning are what make Arctop’s cognition metrics and developer tools possible without implants.

In this post, we aim to help the curious understand what BCI is, explore its capabilities, debunk overhyped claims, and understand its potential impact on our society. Consider this as an invitation to join us in contemplating the future of this technology, its boundaries, emerging opportunities, and how you can be a part of it.

Finding answers to these questions is not an easy task. Even among the BCI community, there is disagreement on priorities, opportunities, and capabilities. It’s also crucial to differentiate between theoretical possibilities and practical limitations. Just because something can work in theory does not mean it will work in practice.

It’s vital for us, amidst this technological surge, to establish common ground for a future conversation that extends beyond research labs and pop culture and addresses humanity’s broader mission to better itself and possibly co-adapt with artificial intelligence.

In this spirit, akin to WIRED’s style, we’ll explain brain-computer interfaces in five levels of difficulty. We hope you find reading it both fun and enlightening! Let’s dive into levels 1 through 3.

Level 1: Child

Imagine telling a computer what to do just by thinking! That’s what BCI is about.

You don’t have to use your hands to type, press any buttons, or even talk. Just put a cool gadget on your head, like a headband, headphones, or special earbuds, and it can “hear” what your brain is thinking and follow your commands.

What you can do with this “magic” is only limited by your imagination!

Level 2: Teen

BCIs offer a revolutionary way to interact with computers by translating your brain’s impulses into commands. You think about an action, and it happens. All you need to do is to wear a special sensor. BCI sensors can even pick up on your emotions, allowing different apps to personalize your experience in amazing ways. Want to search the Internet, send a message, or play your favorite song? Simply think about it.

While this might sound like something out of a sci-fi movie, the past decade has seen incredible strides forward in this field. A blend of advanced scientific knowledge, engineering capabilities, and software development has turned what once seemed like a distant dream into reality.

Let’s try to understand BCI with a fun analogy. Imagine a football stadium filled with people. Now, picture your brain as this stadium. A bit weird, but you got it, right? If not, the AI-generated image below might help.

In this scenario, each person in the stands is like a single brain cell, called a neuron. As they watch the game, they cheer, shout, chant, holler and sing based on what’s happening on the field. BCI sensors capture your brain’s activity similar to how microphones placed outside the stadium would pick up the crowd’s reactions.

Of course, these “outside” measurements have limits. You can’t hear what everyone is saying, but you can definitely get the general vibe of the game and what the score is. A loud roar might mean a goal; sudden gasps could indicate a surprising play. You might also pick up from the recordings a “wave” rippling through the crowd or the building excitement during a tense play that ends with one team’s fans singing their anthem.

Similarly, while a BCI can’t decipher every neuron’s activity or every single thought in your brain, it can detect changes and patterns in your brain at a level that reflects your thoughts and moods - like whether you’re focusing, feeling sleepy, or thinking about moving your hand. Think of it like this: the microphones outside can capture big moments in the game just like BCI sensors can pick up the broader patterns in your brain.

You might be wondering, ‘if BCI can do so many things, why aren’t my friends using it yet?’ The best answer we have is that, like the Apple Vision Pro, it’s right around the corner. Thanks to advancements in scientific knowledge, better sensors, and more powerful computers with AI, new BCI products are starting to emerge. For now, and for a variety of reasons, BCIs are used by relatively few people: mostly developers, researchers, or individuals with disabilities. For example, they help people who’ve lost the ability to speak or move due to a stroke or certain diseases.

Level 3: College Student

“The most profound technologies are those that disappear. They weave themselves into the fabric of everyday life until they are indistinguishable from it.”

Mark Weiser, 1991.

BCI, originally developed for medical use, is rapidly evolving into a significant technology for everyday life. A typical BCI system includes three components: the hardware that measures brain activity, the software that interprets this data, and the mechanism that enables interaction with external devices and provides user feedback (proposed by Wolpaw et al. 2002, one of the foundational papers of the BCI field). You can liken these components to the processes of perception, comprehension, and action – what a computer or AI agent needs to interact with you.
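To make the three components concrete, here is a minimal Python sketch of one pass through the loop. Everything in it is an assumption made for illustration: the function names `sense`, `decode`, and `act`, the simulated 10 Hz signal, and the threshold are hypothetical stand-ins, not a real device API or a production decoder.

```python
import math
import random


def sense(n_samples, fs=250, amplitude_uv=10.0, seed=0):
    """Hypothetical sensor stage: simulated samples in microvolts.

    Returns a 10 Hz rhythm of the given amplitude plus random noise.
    A real system would read these values from hardware instead.
    """
    rng = random.Random(seed)
    return [
        amplitude_uv * math.sin(2 * math.pi * 10 * i / fs) + rng.gauss(0, 2.0)
        for i in range(n_samples)
    ]


def decode(samples):
    """Toy decoder: root-mean-square amplitude of the window.

    Stands in for the signal processing and machine learning stage.
    """
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def act(feature, threshold_uv=5.0):
    """Toy output stage: turn the decoded feature into a command."""
    return "rhythm_detected" if feature > threshold_uv else "idle"


def run_once():
    """One pass of the loop: measure, interpret, respond."""
    samples = sense(n_samples=250)
    return act(decode(samples))


print(run_once())
```

In a real product each stage is far richer, but the division of labor is the same: one part measures, one part interprets, and one part responds.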

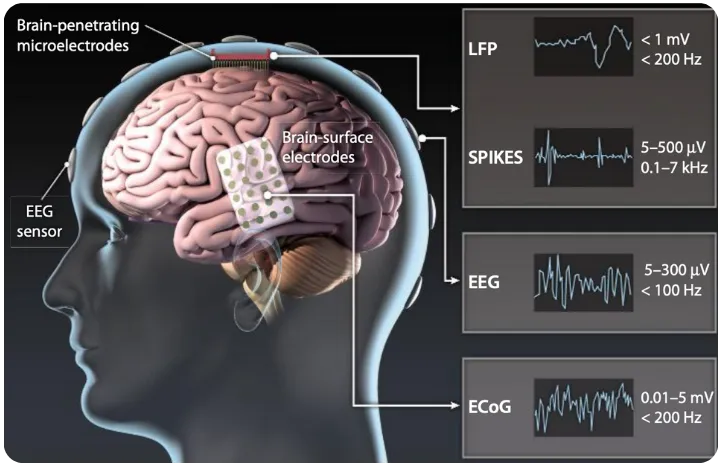

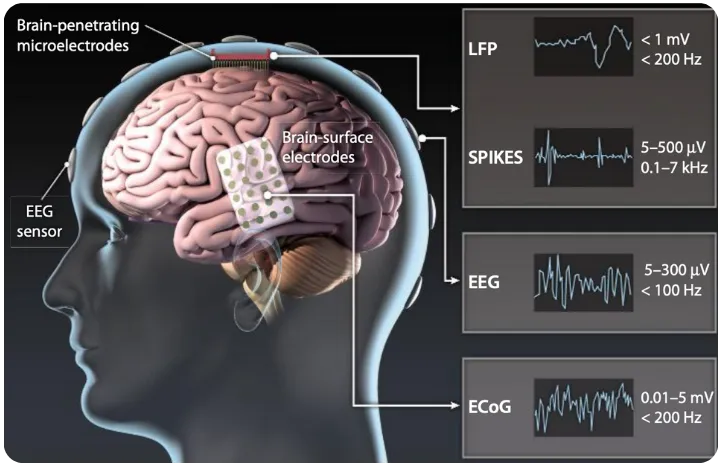

There are various brain-sensing modalities, each with corresponding hardware to measure brain activity. These include electroencephalography (EEG), electrocorticography (ECoG), and local field potential (LFP) for electrical activity (see the image below); magnetoencephalography (MEG) for magnetic activity; and functional magnetic resonance imaging (fMRI) and functional near-infrared spectroscopy (fNIRS) for blood oxygenation (see Table 1 in Saha et al. 2021 for a summary and comparison across these modalities). Among these, EEG – which records the brain’s electrical activity by placing sensors on your scalp – is most prevalent in BCIs due to its safety, comfort, fast tracking of the dynamic brain, and affordability.

Revisiting our football stadium analogy, consider BCIs from EEG sensors outside the head to be similar to microphones placed outside the stadium. One way to glean more information about what is happening inside is to increase the number of microphones (EEG sensors) around various parts of the stadium (the brain). This strategy, “increasing spatial sampling,” enhances the detail and robustness of brain activity data, and correspondingly enhances the decoding capabilities of the BCI system. With more comprehensive data, BCI developers can employ sophisticated brain signal processing and machine learning techniques to decode and understand more intricate patterns of brain activity.
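One reason more sensors help can be shown with a deliberately idealized simulation. The sketch below assumes every sensor sees the same underlying source plus its own independent noise, in which case averaging N sensors shrinks the noise by roughly the square root of N. Real EEG is messier (noise is partly shared between sensors, and each sensor sees a different mixture of sources), so treat the function name, noise level, and sensor counts as illustrative assumptions only.

```python
import math
import random


def rms_error_after_averaging(n_sensors, n_samples=2000, noise_std=5.0, seed=1):
    """Average n_sensors noisy copies of one source and measure what noise remains.

    Idealized assumption: every sensor records the same source plus
    independent Gaussian noise of standard deviation noise_std.
    """
    rng = random.Random(seed)
    fs = 250  # assumed sampling rate in Hz
    total_sq = 0.0
    for i in range(n_samples):
        source = math.sin(2 * math.pi * 10 * i / fs)
        readings = [source + rng.gauss(0, noise_std) for _ in range(n_sensors)]
        estimate = sum(readings) / n_sensors
        total_sq += (estimate - source) ** 2
    return math.sqrt(total_sq / n_samples)


for n in (1, 4, 16):
    print(n, "sensors -> residual noise", round(rms_error_after_averaging(n), 2))
```

With these settings the residual noise for 16 sensors comes out at roughly a quarter of the single-sensor value, which is the square-root behavior the idealization predicts.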

Now let’s delve into what BCIs can actually decipher from brain activity measured via EEG. This technology, akin to an EKG for the brain, has been around for nearly 100 years. EEG continuously captures the brain’s activity in tiny voltage fluctuations on the skin, reflecting various experiences – from sensory reactions and movement intention to cognitive processes like attention, memory, and decision making.

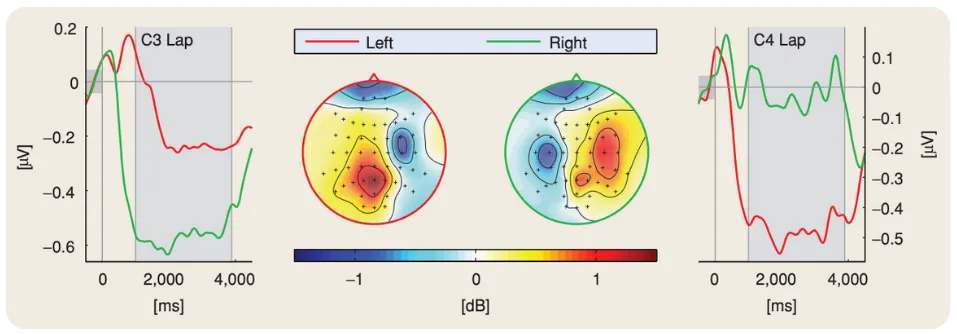

For instance, when you look at a flashing light, your brain’s visual area (occipital lobe) produces oscillatory activities (steady-state visual evoked potentials, or SSVEPs) that reflect the light’s flashing frequency. This phenomenon is often described as the brain “entraining” to the stimulus. Similarly, rhythmic sounds produce corresponding activities (auditory evoked potentials, AEPs) in the auditory area (temporal lobe). These responses mirror not only the beats but also the melodies and emotional content in the music. Even imagining movements, like thinking about moving your left arm, induces identifiable changes (event-related desynchronization, ERD) in the motor planning area (parietal lobe). These diverse signals, all detectable by EEG, have been instrumental in advancing BCI applications over the last two decades.
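The SSVEP idea lends itself to a small worked example: if several lights flicker at different rates, the one a person is looking at should show up as the strongest of those frequencies in the recording. The sketch below uses simulated data and a single-frequency discrete Fourier transform. The candidate frequencies, noise level, and function names are assumptions chosen for illustration, and practical SSVEP decoders typically use more robust methods.

```python
import math
import random


def power_at(samples, freq_hz, fs):
    """Signal power at one frequency, via a single-bin discrete Fourier transform."""
    re = sum(x * math.cos(2 * math.pi * freq_hz * i / fs) for i, x in enumerate(samples))
    im = sum(x * math.sin(2 * math.pi * freq_hz * i / fs) for i, x in enumerate(samples))
    return (re * re + im * im) / len(samples) ** 2


def detect_flicker(samples, candidates_hz, fs):
    """Return the candidate flicker frequency with the most power."""
    return max(candidates_hz, key=lambda f: power_at(samples, f, fs))


def simulate_ssvep(flicker_hz, fs=250, seconds=4, noise_std=2.0, seed=2):
    """Simulated recording: a weak oscillation at flicker_hz buried in noise."""
    rng = random.Random(seed)
    return [
        math.sin(2 * math.pi * flicker_hz * i / fs) + rng.gauss(0, noise_std)
        for i in range(fs * seconds)
    ]


CANDIDATES_HZ = [8, 10, 12, 15]  # hypothetical flicker rates of four on-screen targets
recording = simulate_ssvep(flicker_hz=12)
print(detect_flicker(recording, CANDIDATES_HZ, fs=250))
```

Even though the oscillation is smaller than the noise at any single sample, four seconds of data are enough for the matching frequency to stand out, which is why SSVEP is a popular basis for selection-style BCIs.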

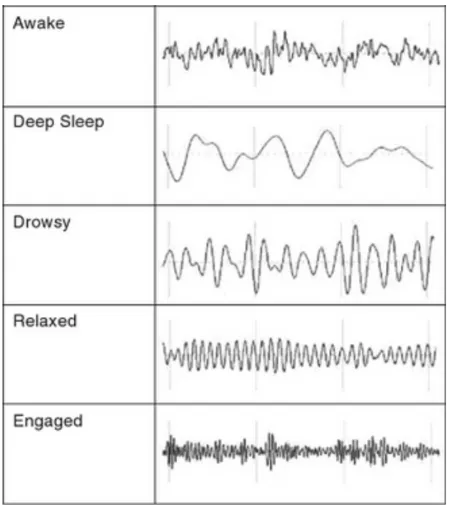

EEG can also reveal different brain states. For instance, closing your eyes induces an instant and distinct power increase in oscillations at around 10 cycles per second in your visual cortex. Sleep stages, from light to deep sleep, are marked by increasingly synchronized, broad, and slow-oscillating brain activities (known as slow waves, oscillating at just a few cycles per second). Interestingly, the EEG patterns during dreaming resemble those of wakefulness. Recent studies have further expanded EEG’s scope, demonstrating its ability to capture various mental states, from changes in attention and emotional states to varying levels of drowsiness.
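The eyes-closed effect is usually quantified as band power: how much of the signal's energy falls between roughly 8 and 12 cycles per second. Here is a minimal sketch on simulated data. The amplitudes standing in for "eyes open" and "eyes closed" are invented for illustration, as are the sampling rate and function names.

```python
import math
import random


def band_power(samples, fs, low_hz, high_hz):
    """Sum discrete Fourier transform power over bins between low_hz and high_hz."""
    n = len(samples)
    total = 0.0
    for k in range(1, n // 2):
        freq = k * fs / n  # center frequency of bin k
        if low_hz <= freq <= high_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            total += (re * re + im * im) / n ** 2
    return total


def simulate_eeg(alpha_amplitude, seed, fs=125, seconds=2, noise_std=2.0):
    """Simulated signal: a 10 Hz rhythm of the given amplitude plus noise."""
    rng = random.Random(seed)
    return [
        alpha_amplitude * math.sin(2 * math.pi * 10 * i / fs) + rng.gauss(0, noise_std)
        for i in range(fs * seconds)
    ]


# Assumed amplitudes: a weak 10 Hz rhythm with eyes open, a strong one with eyes closed.
eyes_open = simulate_eeg(alpha_amplitude=1.0, seed=3)
eyes_closed = simulate_eeg(alpha_amplitude=5.0, seed=4)

ratio = band_power(eyes_closed, 125, 8, 12) / band_power(eyes_open, 125, 8, 12)
print("eyes-closed / eyes-open power in the 8-12 Hz band:", round(ratio, 1))
```

A simple state detector could compare this band power against a per-person baseline, since the strength of the rhythm varies from one individual to the next.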

The brain activities captured by EEG are subtle, often only a few microvolts in amplitude. They’re easily overshadowed by non-brain activities like muscle or eye movement, which can be ten or a hundred times stronger. This complexity demands that BCI developers have a deep understanding of signal processing and machine learning to accurately interpret the faint EEG data, especially in real-time, real-world scenarios.
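One of the simplest defenses against such artifacts is to cut the recording into short windows ("epochs") and discard any window whose swing in voltage is too large to be brain activity. The sketch below applies that rule to simulated data. The 100 microvolt threshold, the epoch length, and the size of the simulated blink are assumptions for illustration, and real pipelines usually combine this with more sophisticated cleaning.

```python
import random


def reject_artifacts(epochs, max_peak_to_peak_uv=100.0):
    """Keep only epochs whose peak-to-peak amplitude stays under the threshold."""
    return [e for e in epochs if max(e) - min(e) <= max_peak_to_peak_uv]


rng = random.Random(4)

# Ten simulated epochs of "brain" activity: a few microvolts of noise each.
epochs = [[rng.gauss(0, 3.0) for _ in range(250)] for _ in range(10)]

# Contaminate epochs 2 and 7 with a blink-like deflection about 100 times larger.
for idx in (2, 7):
    for i in range(100, 150):
        epochs[idx][i] += 300.0

clean = reject_artifacts(epochs)
print(len(clean), "of", len(epochs), "epochs kept")  # 8 of 10 epochs kept
```

Throwing data away is a blunt tool, but it illustrates the core problem: the decoder must be protected from signals that are much larger than the ones it is trying to read.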

Over the past two decades, we have started to see more developments and applications for mainstream use: enhancing computer games and virtual-reality experiences, providing feedback to support meditation training, tracking health metrics like sleep, and adapting learning content to students’ mental states. In our next post, we’ll go into graduate-level detail on how BCIs work and how they are increasingly weaving into the fabric of life.

Levels 4 and 5 coming soon…

Brain-Computer Interface FAQ

- What is a brain-computer interface?

- A brain-computer interface (BCI) is a system that measures brain activity, decodes it with software, and turns those signals into outputs such as metrics, commands, or feedback for a connected device.

- Do BCIs require surgery or implants?

- Most consumer and enterprise BCIs today are non-invasive. They use wearable sensors like EEG headsets or earbuds to capture brain activity through the scalp, so no surgery is required.

- What can BCIs do today?

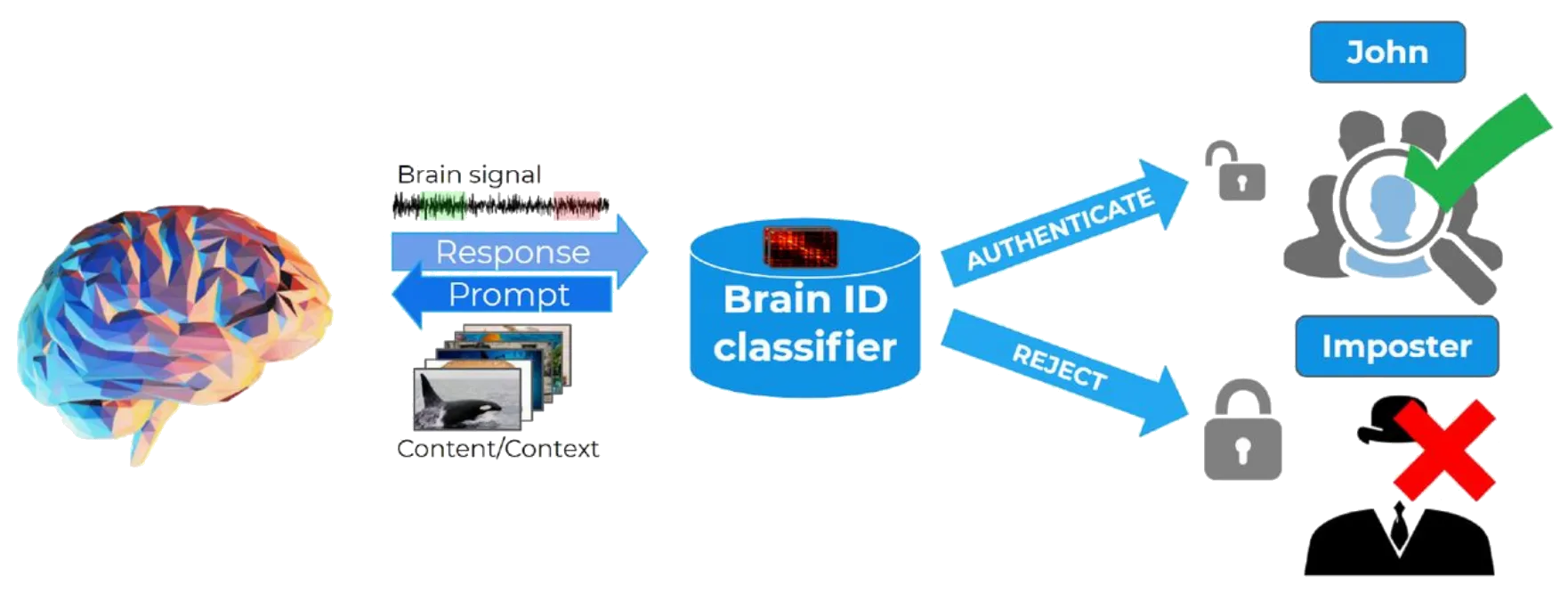

- Modern BCIs can monitor cognition metrics such as focus or engagement, enable adaptive software experiences, support accessibility use cases, and provide biometric authentication based on unique brain signal signatures.

References

- J. J. Vidal, "Toward direct brain-computer communication," Annu. Rev. Biophys. Bioeng., vol. 2, pp. 157–80, Jan. 1973. https://www.annualreviews.org/doi/10.1146/annurev.bb.02.060173.001105

- Jonathan R Wolpaw, Niels Birbaumer, Dennis J McFarland, Gert Pfurtscheller, Theresa M Vaughan, "Brain–computer interfaces for communication and control", Clinical Neurophysiology, Volume 113, Issue 6, 2002, Pages 767-791, ISSN 1388-2457, https://doi.org/10.1016/S1388-2457(02)00057-3.

- Saha Simanto, Mamun Khondaker A., Ahmed Khawza, Mostafa Raqibul, Naik Ganesh R., Darvishi Sam, Khandoker Ahsan H., Baumert Mathias, "Progress in Brain Computer Interface: Challenges and Opportunities", Frontiers in Systems Neuroscience, Vol 15, 2021, https://www.frontiersin.org/articles/10.3389/fnsys.2021.578875

- Nitish V. Thakor, "Translating the Brain-Machine Interface." 2013. Science Translational Medicine, Vol 5 No 210, Available at https://www.science.org/doi/10.1126/scitranslmed.3007303

- B. Blankertz, R. Tomioka, S. Lemm, M. Kawanabe, and K. Muller, "Optimizing Spatial filters for Robust EEG Single-Trial Analysis," IEEE Signal Process. Mag., vol. 25, no. 1, pp. 41–56, 2008.

- T. Budzynski, H. Budzynski, J. Evans, and A. Abarbanel, "Introduction to quantitative EEG and neurofeedback: Advanced theory and applications." 2009.